Early in paper #2 in this series, I noted that it would be the only paper in the third offset strategy (3OS) series with a table of contents. That turned out not to be true. This paper, while only about ⅓ the length of that one, still needs one: the case for changing the doctrine, policy, and many tactics, techniques, and procedures (TTPs) of the Department of Defense (DoD), and in particular the United States Air Force (USAF), is too long to fit well in a single narrative.

So, a warning up front: if you read this entire thing in one sitting, you will probably see a few points made over and over (and over!) again, because I mention them in multiple sections. That's not an accident; it's by design, so that each of the fifteen sections in this paper makes sense when read in a vacuum. I just didn't feel like publishing fifteen separate blogs for part 6 of the 3OS series.

- Executive Summary

- 1) Operationalizing Information

- 2) Doctrine & TTP: The Software-Centric Loop

- 3) Fractal Airpower & Cheap Mass (sUAS)

- 4) DIME & Hybrid Warfare Strategy (Exploit Their Vulnerabilities)

- 5) OODA Across the Enterprise (Acquisitions, Research, Development, Test, Evaluation and Sustainment)

- 6) Swarm-on-Swarm, Directed Energy & Micro-EMP

- 7) Million-Plane Air Force & CCA/Replicator

- 8) The Sixth-Gen Airman (Roles Sunset, AI Integration)

- 9) Dynamic Basing Realities (ACE, but Real)

- 10) Organizational Design: DIU Elevation, New MFPs

- 11) Workforce & Career: Pathfinders + a Gig-Style Economy (and the New WepTacs)

- 12) ATO Revolution: RMF vs. STPA vs. ARCOS

- 13) Data as a Strategic Asset (JADC2, CDAO)

- 14) Five Unorthodox Case Studies

- 15) Implementation Roadmap & Metrics

- Conclusion

- References

Executive Summary

The Department of Defense faces an urgent dilemma: adversaries are fielding adaptable, low-cost technologies faster than our acquisition system can respond. This paper argues for a fundamental shift in posture—away from exquisite, slow-moving programs toward software-defined, contractor-powered, and effects-driven force design. The thesis is simple: victory in the next conflict will not come from the most advanced single platform, but from the force that learns and adapts fastest.

Key Concepts:

- Software-Defined Warfare: Victory in future conflicts will be determined not by the superiority of individual platforms, but by the speed at which a force can learn, adapt, and deploy new capabilities. This requires a shift toward a software-defined, effects-driven force design.

- Time as the Decisive Currency: Measures of effectiveness shift from platform counts to loop speed: time-to-patch, time-to-field, and time-to-data.

- Information as a Weapon: The U.S.'s strategic advantage lies in its information economy. Data, software, and networks should be treated as primary maneuver elements, not just as enablers for hardware.

- Software as the Arsenal: Integrate the civilian technology base through continuous authority to operate (cATO) pipelines, the Modular Open Systems Approach (MOSA), and reciprocity by design.

- Mass and Autonomy: Scale Collaborative Combat Aircraft (CCA) and swarms of small unmanned aerial systems (sUAS) to overwhelm adversaries, guided by cost-per-effect doctrine.

- Acquisition Reform: Overhaul the acquisition process to match the speed of software development, including widespread adoption of cATO, which allows rapid and secure deployment of new software and technologies.

- Resilient Networks: Agile Combat Employment (ACE) that treats command-and-control and data survivability as its primary weapons system.

- Organizational Redesign: Elevate the Defense Innovation Unit (DIU) with its own Major Force Program (MFP), align US Cyber Command's mandate with portable funds, and embed new software-focused billets (Integration & Interoperability Manager, Software Design & Development Supervisor, Contractor & Vendor Relations) as metabolic elements of future squadrons.

- Civilian-Military Integration: The civilian software market is the U.S.'s true arsenal, which demands closer collaboration with contractors.

The takeaway: The Department of Defense must rewire its system economics—acquisition, budgeting, training, and operations—around speed, software, and survivability. Commanders must maneuver software like they maneuver aircraft or armor. Those who learn faster will win.

1) Operationalizing Information

The U.S. wins when we align doctrine and dollars to what our economy actually produces at scale: information, software, and networks—not just metal and composites. I’ve been building this case across Parts 1–5, but let’s state it plainly up front: the strategic advantage is no longer a single exquisite platform; it’s the institutional metabolism that turns data into decisions, code into combat power, and telemetry into faster learning than any adversary can match. That’s the 3OS translated into a software-first economy—policy and practice that behave like our best digital firms, not our slowest programs of record.[1],[2],[3],[4]

In Part 1, I argued that our force design still treats software as “enablement” around a hardware core. That lens is backwards. Data, models, and code are maneuver elements. They are taskable (“push this model to these squadrons by 1400”), targetable (adversaries will try to corrupt, exfiltrate, or deny them), and protectable with the same seriousness as fuel, munitions, and runways. When we elevate them to first-class operational objects, we get better options at lower cost and higher tempo. That reframe is already latent in our policy stack (DoD's Data Strategy, Zero Trust guidance, the National Institute of Standards and Technology's (NIST's) Cybersecurity Framework (CSF) 2.0, and zero trust architecture (ZTA)/risk management framework (RMF) guidance), but we haven’t fully operationalized it at the unit and wing level.[3],[5],[6],[7] Viewing data as ammunition changes planning: you don’t just plan sorties; you plan data flows, model updates, and application programming interface (API) contracts as part of the scheme of maneuver.

From that premise, a few consequences fall out.

First, replace platform-centric planning with software-centric force design. Tactics and techniques live in code and telemetry instead of on a slide deck labeled “CONOPS.” That means you iterate. You observe a failure mode on Monday, adjust a classifier or control law on Tuesday, deploy to a canary group on Wednesday, and fly the TTP on Thursday, capturing real-world performance signals the entire time. That’s the DevSecOps playbook, not a metaphor: pipelines, tests, versioning, rollbacks, and automated compliance vaults. We already have the scaffolding (the Software Modernization Strategy, the DevSecOps Reference Design, and the Software Acquisition Pathway), but we underuse it, treating it as a compliance exercise rather than the main effort.[4],[8],[9],[10] In practice, a “software-centric force design” means standing up a Software Bill of Tactics (SBOT) for each mission family and treating it like the technical order that it is, only faster (I’ll detail SBOT in later sections).

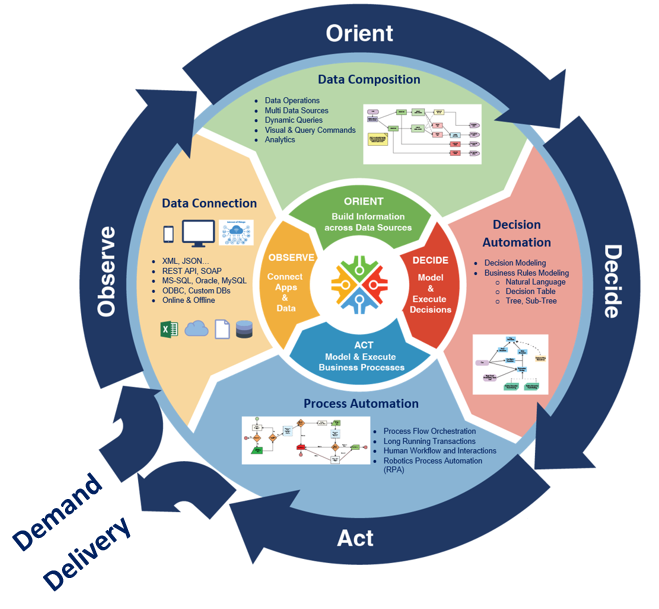

Second, push acquisition clocks to match DevSecOps cadence. In a white paper,[11] my co-authors and I contrasted RMF's governance strengths with hazard-based engineering approaches like System-Theoretic Process Analysis (STPA) and the Defense Advanced Research Projects Agency's (DARPA's) Automated Rapid Certification of Software (ARCOS). The point wasn’t to pick a winner; it was to re-time the system. We need continuous delivery, continuous authorities to operate (cATO), and continuous test. We need faster tools to acquire dual-use technologies and onboard them in days, not months. That is not sloganeering; it’s an architecture and a set of authorities: pre-approved pipelines with inherited controls, automated evidence, and live risk scoring pinned to common vulnerabilities and exposures (CVEs), software bills of materials (SBOMs), and mission hazard analysis, so authorizations are a stream, not a gate.[4],[8],[11],[12],[13],[14],[15],[16] When we do this right, the “decision to field” is often a decision to advance a progressive rollout percentage, not a two-year milestone review. Test is telemetry. Certification is continuous. The longer we wait to make this the default, the more we subsidize the attacker’s observe, orient, decide, and act (OODA) loop.

Third, elevate cyberspace from incident response to persistent campaign. If you’ve read Part 2 and Part 5, you know where I land: our adversaries already operate as persistent actors shaping terrain before crisis—pre-positioning in critical infrastructure, living-off-the-land, and iterating their own playbooks against our seams.[17],[18],[19] Treating cyber as a ticketing queue “after an event” is like treating air defense as “we’ll scramble after the crater.” Campaigning in cyber and info space is not optional hygiene; it’s how you set theater conditions for every other domain. That means defend-forward with allies, hunt-forward as a normal muscle movement, and—crucially—closing the loop so those ops continuously harden the code and data pipelines that our warfighting depends on (we’ll come back to this under Joint All-Domain Command & Control (JADC2) and data contracts).

Fourth, make budget artifacts pay for usable code and telemetry, not milestone theater. We don’t get the behaviors we admire; we get the behaviors we budget. If planning, programming, budgeting & execution (PPBE) and major force programs (MFPs) reward PowerPoint maturity and paper risk burndown, we’ll get exactly that. The PPBE Commission already pointed to outcome-based measures; the Office of Management and Budget's (OMB's) Circular A-11 and the DoD Financial Management Regulation (FMR) give us the levers to translate delivered, running software (and retired tech debt) into real execution credit.[20],[21],[22] Concretely, that means adding budget-relevant, audit-defensible counters for: time-to-patch for CVE-listed vulnerabilities; time-to-field for middle tier acquisition (MTA) and urgent operational need (UON)/joint urgent operational need (JUON) pathways; time-to-decision (T2D) against JADC2 objectives; and cost-per-effect for collaborative combat aircraft (CCA)/small unmanned aerial system (sUAS) packages. When a program kills stale code or decommissions a dead interface, that should earn the same reputational and financial wins as drawing down obsolete hardware. Deprecation is not failure; it’s vigor.

What does this look like at squadron scale? Imagine a fighting wing where every mission thread has:

- A declared data contract (publishers, subscribers, latency, service level objectives (SLOs), decision rights)

- A cATO pipeline with inherited controls to push model and software deltas on cadence

- A telemetry backbone where operational test & evaluation (OT&E) is not a separate phase but a standing fabric

Ops generate data → telemetry flows into the SBOT repo → tests run → a model patch or feature flag shifts behavior → new TTP emerges. Days, not program objective memorandum (POM) cycles. This is not sci-fi; it is ordinary in the best software shops and fully supported by existing DoD policy, if we operationalize it.[3],[23],[24],[25],[26],[27]

What changes for commanders? Two muscles:

- Ask for software effects like fires

- Treat APIs like terrain

A commander should be as comfortable saying “I need a model patch to tighten target classification thresholds in sector Bravo within 48 hours” as “fire for effect.” And staff should be able to publish an API fragmentary order (FRAGO) that adds a subscriber and changes a schema field with rollback baked in. That’s what the modular open systems approach (MOSA), the Future Airborne Capability Environment (FACE), the Universal Command and Control Interface (UCI), and the C5ISR/EW Modular Open Suite of Standards (CMOSS) are trying to enable at system scale (C5ISR being Command, Control, Communications, Computers, Cyber, Intelligence, Surveillance & Reconnaissance, and EW being electronic warfare); we need to normalize it at operational tempo.[28],[29],[30],[31] When the interfaces are the terrain, the team that can change the map fastest wins.

What else changes for the commander? We separate the authority to operate (ATO) decision from cyber professionals who are removed from the tactical fight, and instead align their role, like any other professional function, as a supporting one. The authorizing official (AO) should give the commander an assessment of the cyber risk for a given piece of software, whether bespoke software from a mission design series (MDS)-aligned software factory or dual-use software acquired through an MTA vehicle. The commander, who actually has to do real mission risk assessment, should then weigh the risk of cyber vulnerability against the risk of mission failure from a lack of software support. This is no different from how a comms officer in the 6 shop advises on frequency hopping vs. fixed frequencies for a mission and the relative risks of each. Currently, we give AOs absolute power over the employment of software, even though they often have only the faintest idea of the mission risk they create when they say no. And numerous channels overtly incentivize them to say no.[11]

“But what about the big things?” Hardware still matters—airframes, munitions, depots—but their decisive edge now comes from the software and data woven through them. The platforms don’t go away; they get out-learned or out-updated. The 3OS vision only delivers if we bias the whole enterprise toward learning speed. That means two more institutional shifts that will echo through the rest of this series:

- Governance that accelerates. We will use RMF for what it’s great at (traceability, accountability), but shift assurance left into design safety (STPA/ARCOS) and right into runtime (live controls, kill switches). The cATO “by design” posture is a commander’s safety instrument, not a waiver path.[4],[8],[11],[12],[13],[14],[15],[16] Continuous RMF (cRMF) is not easier than RMF; it's much harder. But it increases warfighter lethality, and it is far cheaper for the US taxpayer than what we do now. When we can serve both the lethality of the force and the taxpayer better, we owe it to ourselves to do the hard work of implementing the better solution.

- Campaigning that compounds. Cyber and information ops should continuously harden our SBOT and data contracts while raising adversary costs. Hunt-forward is not a press release; it’s an ingest that updates our models and signatures weekly.[18]

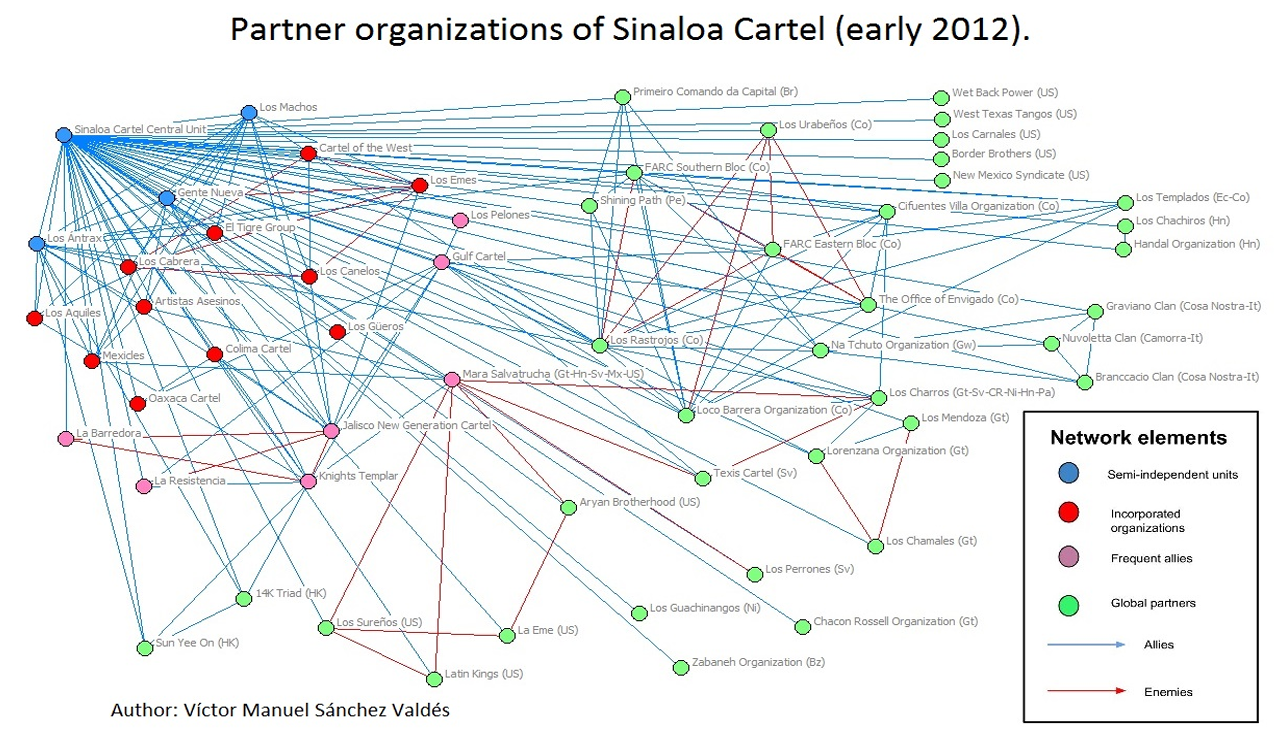

If this feels demanding, good. It is. But it is also coherent with where our strategy documents already say we’re going. The gap is not intellectual; it’s operational. In Part 1 (“Redefining the Third Offset Strategy”), we argued for a software-first force that treats information, code, and networks as the primary sources of combat power. In Part 2 (“An Assortment of Problems”), we mapped the adversary set (Russia, China, Iran, North Korea, Venezuela, terrorists, and narco-cartels) and showed how each exploits hybrid tools (cyber, finance, information, proxies) to grind us down. In Part 3 (“Three Hammers”), we demonstrated that acquisition policies aren't just a delay; they are a strategic risk to the security of the United States. In Part 4 (“Why a Peanut Butter Sandwich Is More Deadly Than a Nuclear Weapon”), we argued the entire strategy and tactics process needs to change. In Part 5 (“Tactical Cyber: Why the Model of Sacrificing All Victories for Strategic Illusion Never Works”), we shifted from ticket-driven incident response to persistent, allied campaigns. Section 1 of this paper is the bridge: put those behaviors at the center of doctrine and budget, not on the margins.

So the thesis is simple: operationalize information. Treat data, models, and code as maneuver elements. Replace platform-centric planning with software-centric force design. Retime acquisition to the pace of DevSecOps. Elevate cyberspace to a persistent campaign. Pay for outcomes measured in running software and telemetry.

If we do this, our airpower becomes fractal, our decision cycles compress, and our learning outruns adversaries who still believe victory is a line item of steel. If we do not, the fastest coder in the fight will write our future for us—and we will be left briefing PowerPoint to a war that has already moved on.

2) Doctrine & TTP: The Software-Centric Loop

Bryon Kroger and Enrique Oti famously opined when they ran Kessel Run that they intended for the Air Force to—and I’m paraphrasing—become a software organization that happens to fly planes.

There are myriad reasons why that is not going to happen; according to the Kessel Run post-mortem, the biggest is that “the cavalry isn’t coming.” But that doesn’t mean our TTPs shouldn’t adapt to work in circumstances where software-centric operations aren’t the norm. This is that playbook.

Our doctrine has to assume that software—not metal—is the fastest maneuver element. Now we must codify a loop where operations change code and code changes tactics—on purpose, on a clock, and at scale.

That is the loop we’re standardizing.

The loop is simple to say and hard to live: ops → telemetry → code change → deploy → new TTP. The unit of work is no longer a platform upgrade or a yearly tactics manual—it’s a small, auditable change set to an interface, model, rule, or visualization that measurably improves the mission. Pipelines (DevSecOps), not PowerPoint, carry those changes forward.[4],[8],[10] Every sortie, exercise, and watch floor becomes a data-gathering event; every sprint becomes a tactics update; every release carries its own rollback and hazard analysis.

That means:

- Telemetry by default. Instrument mission threads the way we instrument web services: golden signals, structured logs, and labeled data that survives contested links. No data, no test.

- Trunk-based development with feature flags. New behaviors ride behind flags, can be canaried to one cell or one CCA element, and rolled back without drama.

- Small diffs, documented effects. A commit message that says “re-tuned jammer geofence; +7% track continuity under global navigation satellite system (GNSS) spoofing” is a tactics entry.

This is not theory. We already run parts of it inside existing authorities; the work now is to make it doctrine.
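To make the feature-flag and canary mechanics concrete, here is a minimal Python sketch, assuming a hypothetical in-process flag object rather than any particular flag service. The flag name, cell IDs, and the 12 km vs. 15 km geofence values are illustrative placeholders. Old and new behavior ship in the same build; the flag decides which one a given cell runs, and rollback is one switch rather than a redeploy.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureFlag:
    """Hypothetical flag gating one new behavior (e.g., a re-tuned jammer geofence)."""
    name: str
    canary_cells: set = field(default_factory=set)  # cells allowed to run the new behavior
    enabled: bool = True  # global kill switch used for rollback

    def is_on(self, cell_id: str) -> bool:
        # New behavior runs only while the flag is live and the cell is in the cohort.
        return self.enabled and cell_id in self.canary_cells

    def widen(self, *cell_ids: str) -> None:
        # Progressive rollout: add cells after the canary holds its SLO.
        self.canary_cells.update(cell_ids)

    def rollback(self) -> None:
        # One switch returns every cell to the prior behavior; no redeploy needed.
        self.enabled = False


def geofence_radius_km(flag: FeatureFlag, cell_id: str) -> float:
    # Trunk-based: old (15 km) and new (12 km) behavior ship in the same build.
    return 12.0 if flag.is_on(cell_id) else 15.0


flag = FeatureFlag("jammer_geofence_v2", {"cca-element-1"})
print(geofence_radius_km(flag, "cca-element-1"), geofence_radius_km(flag, "cca-element-2"))
```

In a fielded system the flag state would presumably live in signed, centrally managed configuration, so a risk owner could widen or kill a behavior without touching the binary.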

From platform FRAGOs to API FRAGOs

Our FRAGOs still assume platforms. But effects now chain across APIs and data contracts, not just radios. Issue API FRAGOs: short orders that publish the interfaces and schemas others must honor to plug into a mission thread. The point is speed: when the schema is the order, the ecosystem can comply in hours, not weeks.

API FRAGO, example (abridged):

- Thread: Targeting → CCA swarm → effects

- Change: TargetMessage.v4 → v5 (adds EWConfidence, deprecates SensorID)

- Publisher: C2 cell JADC2/Targeting@AOR-W

- Subscribers (required): CCA Mission Computer, sUAS EW payloads

- SLA: ≤250 ms intra-flight, ≤1.5 s to edge C2

- Effective: D+5 1200Z; v4 sunset D+20 1200Z

- Reference: MOSA/FACE/UCI/CMOSS profiles[28],[29],[30],[31]

An API FRAGO like this is a tactics update: it tells units how to fight together through interfaces they can automate. It also gives test and training a clear target—validate conformance, measure latency, and cross-check outcomes.
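An API FRAGO only speeds things up if conformance can be checked by machine. The sketch below encodes the abridged FRAGO above as data and checks one message and one measured latency against it. The `TrackID` and `Position` fields are hypothetical stand-ins for the rest of the schema, and the checker is illustrative; it is not part of MOSA, FACE, UCI, or CMOSS.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ApiFrago:
    """Hypothetical machine-readable form of the abridged API FRAGO."""
    message: str
    version: int
    required_fields: frozenset
    deprecated_fields: frozenset
    sla_ms: dict = field(default_factory=dict)  # hop name -> latency budget (ms)


TARGET_V5 = ApiFrago(
    message="TargetMessage",
    version=5,
    # TrackID and Position are assumed fields; EWConfidence comes from the FRAGO.
    required_fields=frozenset({"TrackID", "Position", "EWConfidence"}),
    deprecated_fields=frozenset({"SensorID"}),
    sla_ms={"intra_flight": 250, "edge_c2": 1500},
)


def conformance_errors(frago: ApiFrago, msg: dict, hop: str, latency_ms: float) -> list:
    """Return human-readable violations; an empty list means the subscriber conforms."""
    errors = []
    if msg.get("version") != frago.version:
        errors.append(f"expected {frago.message}.v{frago.version}, got v{msg.get('version')}")
    for name in sorted(frago.required_fields - set(msg)):
        errors.append(f"missing required field {name}")
    for name in sorted(frago.deprecated_fields & set(msg)):
        errors.append(f"still sending deprecated field {name}")
    budget = frago.sla_ms[hop]
    if latency_ms > budget:
        errors.append(f"{hop} latency {latency_ms} ms exceeds {budget} ms SLA")
    return errors
```

Test and training could run the same check against recorded traffic, which turns "validate conformance, measure latency" into a gate rather than a judgment call.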

cATO by design: guardrails at runtime

Continuous ATO is not a slogan; it’s an operational control system. We pre-approve pipelines, platforms, and patterns so units can ship on day one with inherited controls.[4],[8] RMF still governs, but we move the most important checks into runtime: dependency hygiene (CVE watch), SBOM drift, identity posture, and hazard-based monitors tied to mission outcomes.[8],[11],[12],[13],[14],[15],[16]

Three practical moves:

- Pipelines are the accreditation boundary. If your code flows through a known, signed pipeline with attested build steps, you inherit those controls by default. If 80% of the controls come from that pipeline, units focus on the 20% that’s mission-unique.

- Live risk dashboards. Tie CVE exposure, SBOM deltas, and telemetry-based hazards to an operational heat map. If a new CVE lights up a component in a mission thread, risk owners (those in ops, like commanders of maneuver elements on G-series orders, not just Chief Information Officers (CIOs) in cushy Pentagon seats) get the authority to pause or roll back.

- Red-team cadence as safety practice. The same way we do flight safety stand-downs, we run hazard drills against live stacks at a predictable tempo. ATO is now a verb, not a certificate.
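At its core, a live risk dashboard is a join between the SBOM of each mission thread and an advisory feed. The sketch below assumes exact version matching and uses placeholder component names and placeholder advisory IDs (`CVE-0000-...`), not real vulnerabilities; a production feed would need version-range matching and signed SBOM ingest.

```python
# Hypothetical SBOMs: mission thread -> {component: version}. Names are placeholders.
SBOMS = {
    "maritime-isr": {"nav-lib": "1.2.0", "link-router": "2.1.0"},
    "cca-ew-escort": {"nav-lib": "1.3.1", "track-fusion": "0.9.4"},
}

# Hypothetical advisory feed entries: (advisory ID, component, affected versions).
ADVISORIES = [
    ("CVE-0000-0001", "nav-lib", {"1.2.0", "1.2.1"}),
    ("CVE-0000-0002", "track-fusion", {"0.8.0"}),
]


def risk_heat_map(sboms: dict, advisories: list) -> dict:
    """Map each mission thread to the advisory IDs that hit a component it runs."""
    heat = {thread: [] for thread in sboms}
    for advisory_id, component, affected in advisories:
        for thread, components in sboms.items():
            # .get() returns None for components the thread doesn't run, which never matches.
            if components.get(component) in affected:
                heat[thread].append(advisory_id)
    return heat


def threads_for_risk_owner(heat: dict) -> list:
    """Threads whose mission commander must decide: pause, roll back, or accept."""
    return sorted(thread for thread, hits in heat.items() if hits)
```

The design choice worth noting is the output: a list of threads routed to an operational risk owner, not a ticket routed to a CIO queue.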

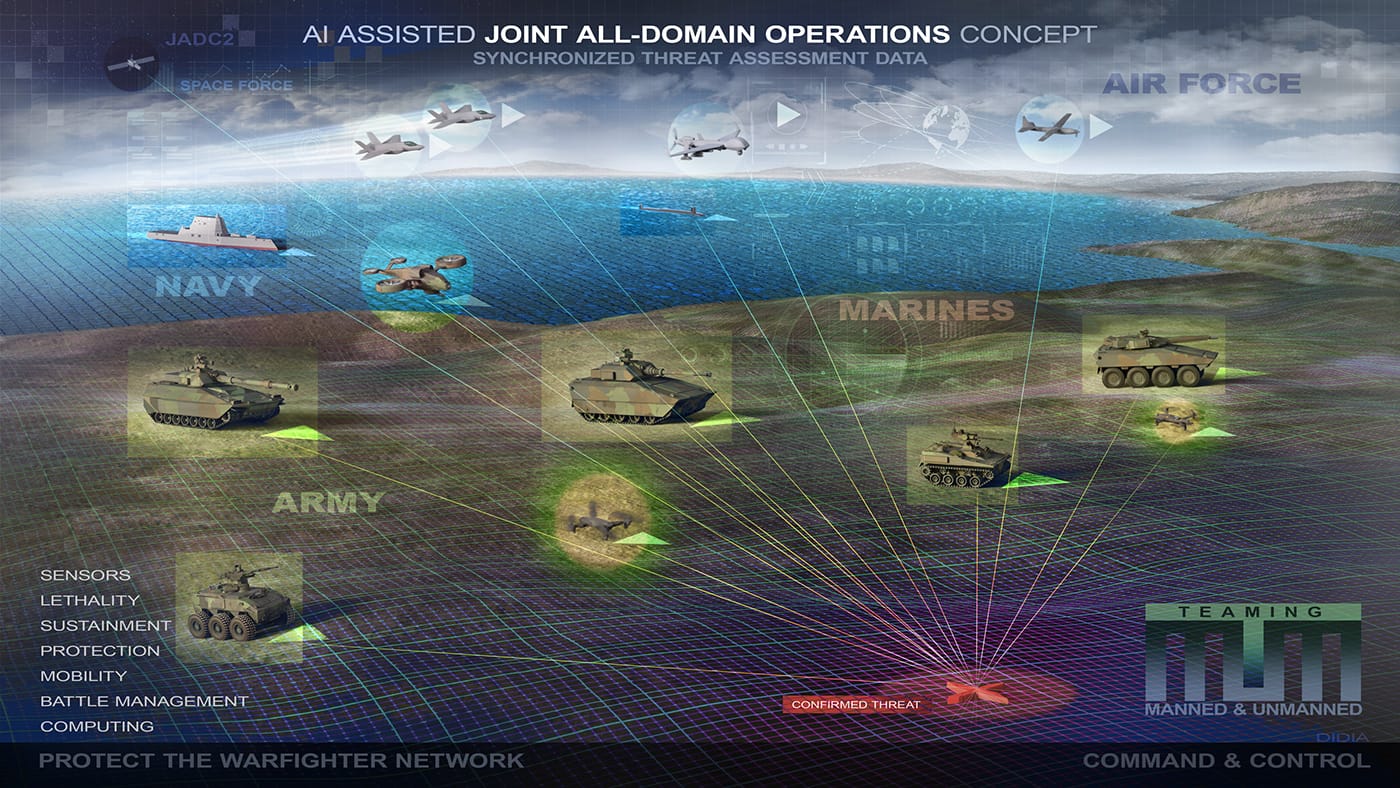

Bake JADC2 data contracts into TTPs

Today, our TTPs describe the “what” and “who” but hand-wave the data plumbing. That’s backwards. For any joint thread (ISR, fires, mobility, C2), TTPs must include a Data Contract Block:

- Producers & frequency: who publishes which message at what rate

- Subscribers & decisions: who consumes, to make which decisions

- SLO/Service Level Agreement (SLA): latency, jitter, freshness, and loss budgets

- Authority: who can change the schema and on what notice

- Security posture: classification, ZTA policy, cross-domain rules

You can write this in one page, and it makes the difference between a “concept” and a working kill chain.[3],[23],[24] It also lets test and OT&E verify outcomes deterministically: if the SLO isn’t green, the tactic isn’t real.
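A Data Contract Block can also live as data that a test harness evaluates. The Python sketch below mirrors the elements listed above (producer and rate, subscribers and decisions, SLO budgets, schema authority, security posture). The default budget values are illustrative assumptions, jitter is left out for brevity, and security posture is reduced to a classification string.

```python
from dataclasses import dataclass, field


@dataclass
class DataContract:
    """Hypothetical encoding of a TTP Data Contract Block for one thread."""
    thread: str
    producer: str
    rate_hz: float
    subscribers: dict = field(default_factory=dict)  # subscriber -> decision it feeds
    latency_slo_ms: float = 250.0
    freshness_slo_s: float = 2.0
    loss_budget_pct: float = 1.0
    schema_owner: str = ""  # who may change the schema
    change_notice_h: int = 72  # required notice before a schema change
    classification: str = "UNCLASSIFIED"


def slo_status(c: DataContract, latency_ms: float, age_s: float, loss_pct: float) -> str:
    """'green' only if every budget holds; otherwise name each breach."""
    breaches = []
    if latency_ms > c.latency_slo_ms:
        breaches.append(f"latency {latency_ms} ms > {c.latency_slo_ms} ms")
    if age_s > c.freshness_slo_s:
        breaches.append(f"freshness {age_s} s > {c.freshness_slo_s} s")
    if loss_pct > c.loss_budget_pct:
        breaches.append(f"loss {loss_pct}% > {c.loss_budget_pct}%")
    return "green" if not breaches else "red: " + "; ".join(breaches)
```

In practice the observed values would come from the thread's telemetry over a test window (for example, a p95 latency), so OT&E scores the contract rather than a demo.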

Request software effects like fires

Leaders must get comfortable asking for software effects with the same precision as they request fires. Make Call-for-Software Effects (CSE) a standard report format—quick, unambiguous, and executable within hours:

CSE Nine-Line (sketch):

- Thread: (e.g., “CCA EW escort / AOR-W”)

- Observed issue: (“GNSS spoofing increased track dropouts >12%”)

- Desired effect: (“Hold ≥95% track continuity under spoofing”)

- Levers permitted: (model retune / threshold change / feature flag on / user interface (UI) tweak)

- Risk bounds: (no impact to fratricide guardrails; latency budget unchanged)

- Telemetry to collect: (list signals; label schema)

- Test gate: (what constitutes success; canary size; rollback trigger)

- SLA: (time to canary; time to theater)

- Authority: (mission commander / risk owner)

This is how we turn “I need help” into a change ticket that wins the sortie. It also generates clean artifacts for OT&E and ATO, closing the loop.
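Because the CSE is meant to be quick and unambiguous, it lends itself to automatic intake checks. This is a minimal sketch, assuming hypothetical snake_case field names that paraphrase the nine lines and a lever whitelist taken from the "Levers permitted" line; it is not a published report format.

```python
# Field names paraphrase the CSE Nine-Line; they are hypothetical, not a standard format.
CSE_FIELDS = (
    "thread", "observed_issue", "desired_effect", "levers_permitted",
    "risk_bounds", "telemetry", "test_gate", "sla", "authority",
)
ALLOWED_LEVERS = {"model_retune", "threshold_change", "feature_flag", "ui_tweak"}


def cse_problems(cse: dict) -> list:
    """Return reasons a CSE cannot yet be worked; an empty list means it is fileable."""
    problems = []
    for name in CSE_FIELDS:
        value = cse.get(name)
        # Missing, empty, or whitespace-only lines all count as blank.
        if not value or (isinstance(value, str) and not value.strip()):
            problems.append(f"line '{name}' is blank")
    for lever in cse.get("levers_permitted") or []:
        if lever not in ALLOWED_LEVERS:
            problems.append(f"lever '{lever}' is not permitted")
    return problems
```

Rejecting an incomplete CSE at intake is cheaper than discovering mid-sprint that nobody named a rollback trigger or a risk owner.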

Tie OT&E to telemetry (or don’t do it)

Every trial and exercise should be a data sprint that immediately yields:

- A labeled dataset committed to the mission repository,

- A merged change set (code, model, or interface), and

- A TTP delta with measurable effects.[25],[26],[27]

If an event doesn’t produce those three, we should ask why we ran it. This is how we stop funding “demo theater” and start funding learning velocity.

A day-in-the-life example

A sUAS cell in "the western AOR" reports spoofing-driven drops on a maritime ISR thread (Part 2’s adversary playbook in action). They file a CSE. The software cell pulls last night’s telemetry, runs a targeted model retune, and ships it behind a feature flag via an accredited pipeline (with controls inherited from either government-owned or contracted cATO service providers). A canary on one flight proves the SLO; API FRAGO v5 goes out with a minor schema addition (EWConfidence). OT&E validates latency and continuity; the TTP entry is updated with the new tactic and rollback note. Total elapsed: 72 hours, not a POM cycle.

That’s the loop. Not hypothetical—just institutionalized.

What changes on Monday

To make this real without waiting on a new strategy document:

- Publish the first three API FRAGOs for one mission thread per wing. Keep them tiny. Enforce them ruthlessly.

- Stand up the risk dashboard tied to CVE and SBOM on that same thread. Give the mission commander pause/rollback authority. Contractors that already hold ATOs and reciprocity do this today.

- Run a 30-day CSE sprint. Require every squadron to submit at least one CSE, however small. Measure time-to-canary and time-to-theater.

- Tag your telemetry. Pick the five golden signals for the thread; standardize names and units; enforce in code reviews.

Do that once, end-to-end, and people will stop asking “what is JADC2?” because they will have used it.
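Enforcing telemetry names in code review is easiest when a linter does the nagging. The sketch below assumes a hypothetical naming convention (snake_case plus a unit suffix) and a hypothetical registry of five golden signals for one thread; the point is that the registry, not reviewer memory, defines what a valid signal looks like.

```python
import re

# Hypothetical golden signals for one thread: base name -> required unit suffix.
GOLDEN_SIGNALS = {
    "track_continuity": "pct",
    "link_latency": "ms",
    "gnss_spoof_events": "count",
    "c2_freshness": "s",
    "sortie_effects": "count",
}
NAME_RE = re.compile(r"^[a-z][a-z0-9_]*_(pct|ms|s|count)$")


def lint_telemetry(names: list) -> list:
    """Return one problem string per non-conforming signal name."""
    problems = []
    for name in names:
        if not NAME_RE.match(name):
            problems.append(f"{name}: not snake_case with a unit suffix")
            continue
        base, unit = name.rsplit("_", 1)  # split off the unit suffix
        expected = GOLDEN_SIGNALS.get(base)
        if expected is None:
            problems.append(f"{name}: not a registered golden signal")
        elif unit != expected:
            problems.append(f"{name}: unit should be {expected}")
    return problems
```

Run as a pre-merge check, a non-empty result blocks the change, which is what "enforce in code reviews" looks like without relying on reviewer vigilance.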

How we’ll measure ourselves

Doctrine that doesn’t change budgets and behavior is just literature. For this loop, the scoreboard is blunt:

- Time-to-canary (CSE filed → first live test)

- Time-to-theater (CSE filed → wide release)

- Percent of sorties with feature-flagged behavior (learning at the edge)

- SLO attainment on data contracts (latency/freshness)

- Defect escape rate (how often we roll back; trending down is good)

- TTP churn (number of small, merged changes per quarter)

These metrics align directly with the references we already cite—DevSecOps pipelines, software modernization, cATO, and open standards[4],[8],[11],[28],[29],[30],[31]—and with the ethos of the series so far: move faster than the problem learns.
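Most of this scoreboard is arithmetic over timestamps and counts. A minimal sketch, assuming hypothetical CSE records that carry `filed`, `canary`, and `theater` datetimes; SLO attainment is left out here. Medians are used instead of means so one stalled CSE doesn't mask typical loop speed.

```python
from datetime import datetime
from statistics import median


def hours_between(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def loop_scoreboard(cses: list, releases: int, rollbacks: int,
                    sorties: int, flagged_sorties: int, merged_ttp_changes: int) -> dict:
    """Compute a subset of the loop metrics from hypothetical CSE timestamps and counts."""
    return {
        "time_to_canary_h": median([hours_between(c["filed"], c["canary"]) for c in cses]),
        "time_to_theater_h": median([hours_between(c["filed"], c["theater"]) for c in cses]),
        "flagged_sortie_pct": 100 * flagged_sorties / sorties,
        "defect_escape_rate": rollbacks / releases,  # rollbacks per release
        "ttp_churn": merged_ttp_changes,  # small merged changes this quarter
    }


t0 = datetime(2025, 1, 6, 0, 0)
cses = [
    {"filed": t0, "canary": datetime(2025, 1, 7, 6, 0), "theater": datetime(2025, 1, 9, 0, 0)},
    {"filed": t0, "canary": datetime(2025, 1, 6, 18, 0), "theater": datetime(2025, 1, 8, 0, 0)},
]
board = loop_scoreboard(cses, releases=25, rollbacks=2, sorties=200,
                        flagged_sorties=40, merged_ttp_changes=31)
```

With these made-up inputs, the two CSEs take 30 and 18 hours to canary (median 24) and 72 and 48 hours to theater (median 60).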

The heart of this section is cultural, not technical: we’re giving commanders a new muscle memory. Ask for software effects. Have a staff that can write orders as interfaces. Treat risk as a live signal, not a binder. And insist that every operation makes the code—and therefore the tactic—better. That is how a software-first force fights.

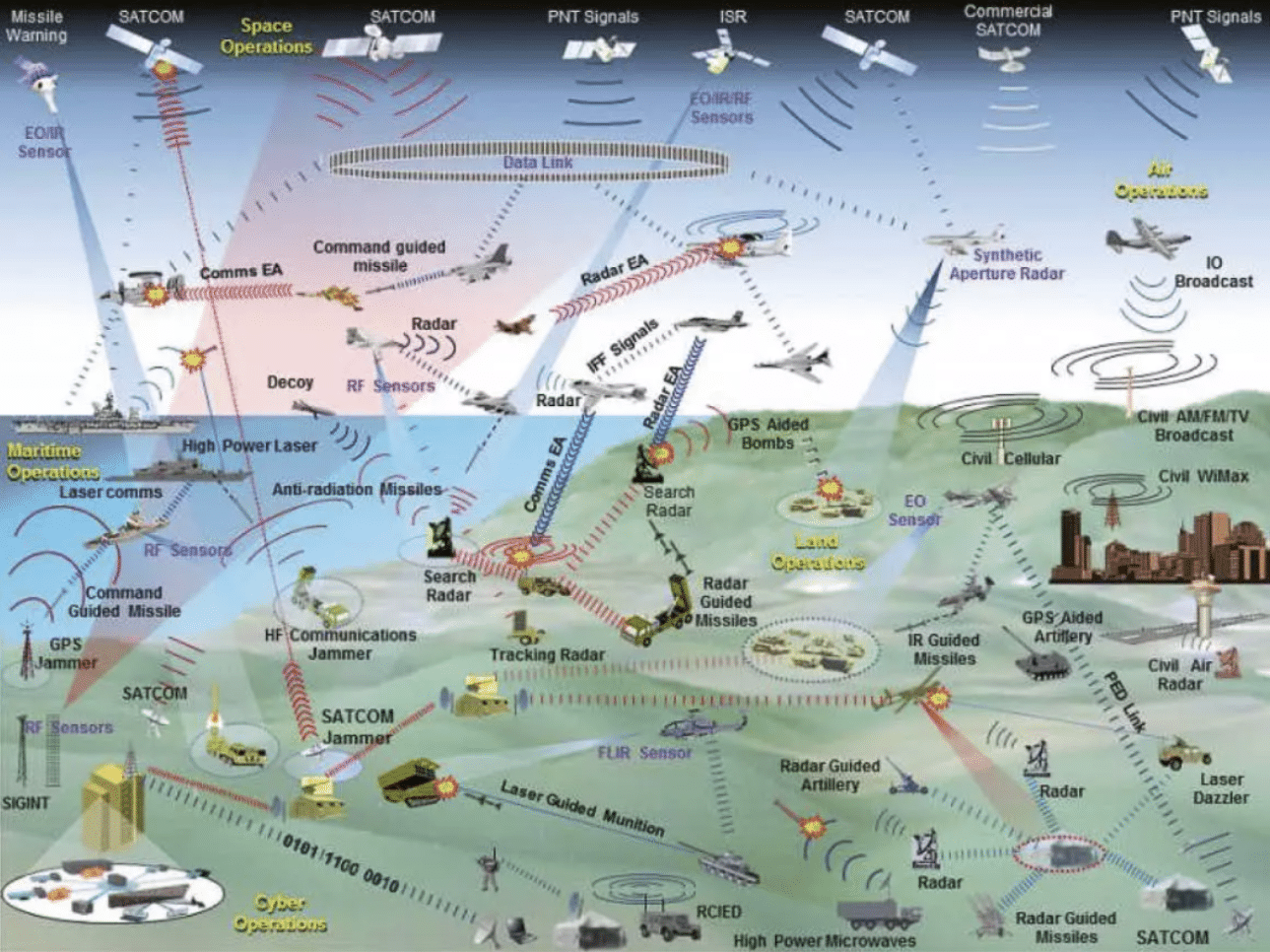

3) Fractal Airpower & Cheap Mass (sUAS)

Mass wins when mass can learn. The center of airpower is shifting from a handful of exquisite aircraft to teams of inexpensive, updatable uncrewed systems that saturate sensors, complicate targeting, and trade software speed for hardware cost. Part 2 showed adversaries iterating cheap threats at scale. Part 3 examined how the current structure of software delivery is so broken that our premier systems can't communicate with each other. This section turns that into force design: fractal formations of sUAS at the edge, commanded by humans and CCAs, stitched together by open standards, and defended by a living counter-counter-UAS playbook.

Why cheap mass, now

A million dollars of software updates across ten thousand airframes can outpace a billion-dollar platform that can’t change. Attritable sUAS and CCAs are already pointing the way: CCAs shift risk and add reach; sUAS give us density, deception, and strike opportunities that punish any adversary who presents fixed, expensive targets.[32],[33] Programs like Golden Horde (networked weapons behaviors) and Skyborg (autonomy core) were interesting experiments proving we can compose effects from modular autonomy and comms, although the program management of both can only be considered a failure given their results.[34],[35] The logistics tail is catching up too; AFWERX's Agility Prime showed how to partner with commercial supply chains when it helps us move parts, batteries, and airworthiness faster,[36] although that effort was more about Great Power competition.[37] The evidence isn’t limited to these US-based examples: scale beats exquisite when the code moves faster than the counter. The most obvious example is Operation Spider's Web, in which Ukraine inflicted massive asymmetric financial (and strategic) damage on Russia using sUAS.[38]

These drones, costing $600–1,000, successfully struck aircraft such as the Tu-95MS and Tu-22M3—bombers worth billions that Russia uses to launch Kh-101 and Kh-22 missiles, respectively. - Kateryna Bondar

This is just commercial hardware running open-source software, flown for fun (Intel). Forget Ukraine's Spider's Web; what autonomy will be able to do at scale is a whole order of magnitude scarier.

Fractal formations: team-of-teams by design

Build the force like a fractal—repeatable cells that roll up cleanly:

- Element (x4 sUAS): one sensor leader, one EW/disruption, one decoy, one shooter, or whatever combination the mission requires. Mesh by default; alt-nav and peer-recovery baked in.

- Flight (x4 elements): adds a CCA or manned teammate as the orchestrator and high-power relay.

- Package (x4 flights): composes mission threads (ISR → target → effects → battle damage assessment (BDA)) through published APIs.

This isn’t just formation math; it’s an API contract. The interfaces (MOSA, FACE, UCI, CMOSS) are the doctrine for how teams discover, authenticate, exchange, and act. If a vendor can’t emit and ingest the message set within the required SLOs, they’re not on the team.[28],[29],[30],[31] Keep the schemas small and versioned, and you can shift behaviors across thousands of airframes overnight. There's already an app for that: Fleetforge, which is fully hardware-agnostic, comes with unlimited government rights, and is delivered on a Platform as a Service (PaaS) with an ATO and continuous delivery on commercial cloud.

A day-zero kit each unit needs

- Mission schemas and profiles (FACE/UCI/CMOSS) pinned to a version.

- Feature-flagged autonomy behaviors (approach, jam, feint, swarm split) with guardrails.

- Telemetry labels (golden signals) identical from sUAS to CCA, so learning scales.

- Canary tools to light up one element, then one flight, before pushing package-wide.
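The fractal structure and the canary tooling fit together: the same hierarchy that organizes the force can define the rollout rings. A minimal sketch, assuming the 4 x 4 x 4 structure above and hypothetical airframe IDs; the CCA or manned orchestrator at flight level is not modeled.

```python
ELEMENT_SIZE = 4          # sUAS per element
ELEMENTS_PER_FLIGHT = 4   # elements per flight (orchestrator not modeled)
FLIGHTS_PER_PACKAGE = 4   # flights per package


def build_package(pkg: str = "P1") -> list:
    """Nested lists: package -> flights -> elements -> airframe IDs (hypothetical IDs)."""
    return [
        [
            [f"{pkg}-F{f}-E{e}-U{u}" for u in range(1, ELEMENT_SIZE + 1)]
            for e in range(1, ELEMENTS_PER_FLIGHT + 1)
        ]
        for f in range(1, FLIGHTS_PER_PACKAGE + 1)
    ]


def rollout_stages(package: list) -> list:
    """Cumulative canary rings: one element, then its flight, then the whole package."""
    first_flight = package[0]
    return [
        ("element", list(first_flight[0])),
        ("flight", [u for element in first_flight for u in element]),
        ("package", [u for flight in package for element in flight for u in element]),
    ]


for ring, airframes in rollout_stages(build_package()):
    print(ring, len(airframes))
```

This yields rings of 4, 16, and 64 airframes; a pipeline would push a change to the next ring only after the previous ring's SLO stays green.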

Counter-counter-UAS: plan to be jammed, spoofed, and hunted

The adversary gets a vote, and a jammer. We have to assume GNSS spoofing, uplink denial, and layered counter-UAS (C-UAS) from day one. So we pre-bake resilience:

- EW hardening: frequency agility, low-probability comms modes, autonomy floors that keep formations useful when blind.

- Directed-energy integration: treat the Air Force Research Laboratory's (AFRL's) Tactical High-power Operational Responder (THOR), a high-power microwave (HPM) weapon; the US Navy's High Energy Laser with Integrated Optical-dazzler and Surveillance (HELIOS); and the US Army's Directed Energy Maneuver Short-Range Air Defense (DE M-SHORAD) laser as friendly fires with published kill boxes and hold-fire logic so our swarms don’t ride into our own beams.[39],[40],[41]

- Deception as a TTP: dedicated decoy elements that present plausible signatures to burn the enemy’s magazine and decision time.

- Firmware agility: when they land a new exploit, we rotate keys, patch firmware, and swap behaviors inside days (if not hours), not quarters (or often years)—because the kill chain is now also a patch chain.[42],[43],[44]

Make this muscle memory: every C-UAS discovery yields a counter-counter update—a tiny change set to comms parameters, geofences, or autonomy thresholds—pushed through the same pipelines that carry software to jets.

Supply chains and firmware are lines of communication

Cheap mass fails if our parts and code are compromised. Treat supply chain and firmware provenance like fuel lines:

- Zero Trust for control links and ground stacks (identity, micro-segmentation, continuous posture checks).[7]

- Secure Software Development Framework (SSDF) processes for every vendor touching flight code or ground control station (GCS) binaries; no exceptions for “small teams.”[45] This applies only to software factory work (whether executed by government or contractor), not to dual-use technologies that already go through a contracted rapid RMF process.

- SBOM at the edge (signed, queryable), plus an automated CVE watch that flags components in live mission threads when a vulnerability is exposed.[46],[47]

- Provisioning hygiene: hardware roots of trust, per-airframe credentials, revocation that works when disconnected.

- Spare-part discipline: motors, props, batteries treated as tracked configuration items, even if they are fully expendable. If a lot goes bad, we know which thousand airframes to pull.

This is dull until it saves a wing. It will.
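A minimal sketch of the automated CVE watch described above, assuming a deliberately simplified SBOM (component name plus exact version per mission thread) and a hypothetical advisory record that lists affected versions explicitly. Real SBOM formats such as SPDX and CycloneDX, and real CVE feeds, carry far richer version-range data; every name and ID below is illustrative, not a real component or advisory.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Component:
    name: str
    version: str

@dataclass(frozen=True)
class Advisory:
    advisory_id: str          # hypothetical ID, not a real CVE
    component: str
    affected_versions: frozenset

def flag_threads(sboms: dict, advisories: list) -> dict:
    """Map each mission thread to the sorted advisory IDs that hit its SBOM."""
    hits = {}
    for thread, components in sboms.items():
        for comp in components:
            for adv in advisories:
                if comp.name == adv.component and comp.version in adv.affected_versions:
                    hits.setdefault(thread, set()).add(adv.advisory_id)
    return {thread: sorted(ids) for thread, ids in hits.items()}

# Hypothetical SBOMs for two mission threads and one hypothetical advisory.
sboms = {
    "maritime-isr": [Component("radio-stack", "2.1"), Component("gcs-ui", "5.0")],
    "base-defense": [Component("radio-stack", "2.2")],
}
advisories = [Advisory("ADV-001", "radio-stack", frozenset({"2.0", "2.1"}))]
print(flag_threads(sboms, advisories))  # {'maritime-isr': ['ADV-001']}
```

Keying the result by mission thread rather than by component is the point: the alarm tells a commander which thread is exposed, not just which library is bad.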

Culture: permission to iterate in public

We don’t break cultural gravity with memos; we break it with visible wins. Pair that with top-cover pathways that pull quantity through the system: the Rapid Defense Experimentation Reserve (RDER) to stress test promising tech in real mission threads, and the Defense Innovation Unit (DIU) to scale what works with production contracts instead of indefinite pilots.[48],[49] Tie both to a clear message: we’re not buying “a drone,” we’re buying a learning curve.

While working for AFWERX, the author made this video[50] (apologies for the abrupt ending without a nice cherry on top). It summed up the realities of near-peer use of sUAS and the use of proxies to bleed the DoD's coffers dry. If anything, the video was a PowerPoint + Artificial Intelligence (AI) rendering of the story later told in paper #3 of this series. Although the video predates that blog, the author was on active duty at the time and was trying to use it to tell that story inside the Pentagon. Those efforts failed, but with Operation Spider Web[38] and the $8.5t (trillion with a T)[51] wasted on the Global War on Terror (GWOT) both now reality, it turns out the video was accurate all along. Now that the author is more concerned with getting this information out en masse than with pitching it to terrible leadership at former commands, it is hosted here for public consumption.

Tactics you can fly this quarter

- Edge-heavy ISR: sUAS elements create a moving picket line with local fusion; CCAs sprint to exploit contacts. Publish the ISR schema, enforce the SLO, and you’ve got a living net.

- Magazine depletion: decoy elements advertise appetizing signatures to pull enemy interceptors or C-UAS shots, while shooters trail at offset angles. Count cost-per-shot—we want them firing dollars at our pennies.

- Swarm escort: sUAS throw noise and false paths in front of CCAs during ingress; if EW bites, the autonomy floor switches the swarm to pre-coordinated dead-reckoning patterns until the link returns. Edge AI can keep updating that pre-coordination right up until the link fails, making enemy prediction modeling far more difficult.

- Pop-up strike: cached mission packages (maps/models) live on edge nodes; a feature flag unlocks a new behavior for a specific target set without touching the rest of the stack.

Each tactic is a small interface + small behavior. That’s why it scales.
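To make "small interface + small behavior" concrete, here is a minimal sketch of two of the tactics above: the swarm-escort autonomy floor (fall back to the last pre-coordinated pattern when the link drops) and the pop-up-strike feature flag scoped to one target set. `SwarmNode`, the pattern names, and the target-set keys are all hypothetical; this illustrates the control logic only, not any fielded autonomy stack.

```python
from dataclasses import dataclass, field

@dataclass
class SwarmNode:
    # Pre-coordinated dead-reckoning pattern, refreshed by edge AI while the link holds.
    fallback_pattern: str = "orbit-hold"
    # Feature flags keyed by target set: each flag unlocks one behavior, nothing else.
    flags: dict = field(default_factory=dict)

    def refresh_fallback(self, pattern: str, link_up: bool) -> None:
        """Accept a new pre-coordinated pattern only while the link is up."""
        if link_up:
            self.fallback_pattern = pattern

    def select_behavior(self, link_up: bool, target_set: str) -> str:
        """Pick the behavior for this tick of the control loop."""
        if not link_up:
            # Autonomy floor: EW has cut the link, so fly the last agreed pattern.
            return self.fallback_pattern
        # Flagged behaviors apply only to their own target set.
        return self.flags.get(target_set, "default-escort")

node = SwarmNode()
node.refresh_fallback("dead-reckon-B", link_up=True)
node.flags["coastal-radars"] = "pop-up-strike-v2"
node.refresh_fallback("dead-reckon-C", link_up=False)   # ignored: no link
print(node.select_behavior(True, "coastal-radars"))   # pop-up-strike-v2
print(node.select_behavior(True, "convoy"))           # default-escort
print(node.select_behavior(False, "coastal-radars"))  # dead-reckon-B
```

Turning the new behavior on for one target set touches a single dictionary entry, which is why a flag can ship without re-certifying the rest of the stack.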

Training and OT&E: rehearse the interference

You fight how you test. OT&E must simulate the ugly: GNSS lies, satellite communications (SATCOM) dropouts, spectrum crowding, adversary spoof farms, and blue-force laser keep-out zones. Make every event yield:

- a labeled dataset

- a merged change set

- an updated TTP with measured effect

If a trial doesn’t produce those, we ran a pageant, not a practice.

Metrics that matter

Doctrine is what you resource. For cheap mass, the scoreboard is ruthless and public:

- Cost-per-effect (including spares & comms) vs. the enemy’s cost-per-shot.

- Time-to-patch (firmware or autonomy) from detection to fielding.

- SLO attainment on ISR/targeting schemas (latency, freshness, continuity).

- Attrition tolerance (fraction of mission completed under x% losses).

- Inventory churn (how many airframes or payloads we retire intentionally each quarter to keep the curve hot).

- C-UAS burn ratio (enemy shots per blue sUAS lost).[42],[43],[44]

If these numbers move the right way, you’re winning—even before the headlines catch up.
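Three of the scoreboard metrics above reduce to simple ratios. The sketch below computes cost-per-effect, the C-UAS burn ratio, and a cost-exchange ratio (enemy dollars fired per blue dollar spent). The field names and the example numbers are illustrative assumptions, not real program data; attrition tolerance and inventory churn need mission-level data and are left out.

```python
from dataclasses import dataclass

@dataclass
class Engagement:
    blue_spend: float           # dollars: airframes + spares + comms
    effects_delivered: int      # mission effects achieved
    blue_lost: int              # blue sUAS lost
    enemy_shots: int            # enemy interceptor / C-UAS shots fired
    enemy_cost_per_shot: float  # dollars per enemy shot

    def cost_per_effect(self) -> float:
        return self.blue_spend / self.effects_delivered if self.effects_delivered else float("inf")

    def burn_ratio(self) -> float:
        """Enemy shots per blue sUAS lost (the C-UAS burn ratio)."""
        return self.enemy_shots / self.blue_lost if self.blue_lost else float("inf")

    def cost_exchange(self) -> float:
        """Enemy dollars fired per blue dollar spent; above 1.0 they fire dollars at our pennies."""
        return self.enemy_shots * self.enemy_cost_per_shot / self.blue_spend

# Illustrative numbers only.
e = Engagement(blue_spend=200_000, effects_delivered=10, blue_lost=40,
               enemy_shots=60, enemy_cost_per_shot=100_000)
print(e.cost_per_effect(), e.burn_ratio(), e.cost_exchange())  # 20000.0 1.5 30.0
```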

What changes on Monday

- Pick one thread (maritime ISR, border ISR, or base defense) and publish the v1 schema with SLOs. Enforce it across one wing.

- Stand up a sUAS cell with four elements and a CCA partner; give them a 30-day CSE sprint: one change per week, measured in the scoreboard above.

- Pre-wire directed energy into blue rules of engagement (ROE) and TTPs—THOR/HELIOS/DE M-SHORAD as planned effects with keep-out logic.[39],[40],[41]

- Turn on SBOM/CVE alarms for the sUAS/GCS stack; empower the mission commander to roll a canary when something lights up.[7],[46],[47]

- Buy for learning, not perfection: use DIU/RDER lanes to contract batches of 100–1000, not showcases of 10; plan for >20% to be retired within 12 months and for vendors to be swapped rapidly based on lowest total cost of ownership. Prevent vendor lock-in even if it means more work for program managers (PMs) and contracting officers (KOs) at the Program Executive Office (PEO).[48],[49]

- Brief the wing on failure math: we’re going to lose airframes and win campaigns. That’s the trade.

Cheap mass isn’t about throwing bodies at problems; it’s about throwing brains at scale—software brains that update faster than the adversary can target. Build the force fractally. Make the interfaces the order. Normalize directed energy and deception as routine teammates. Treat supply chain as a frontline. And then measure what matters: how quickly the swarm learns.

That’s airpower in an information-first fight.

4) DIME & Hybrid Warfare Strategy (Exploit Their Vulnerabilities)

Hybrid war is a campaign of campaigns. It’s not one silver bullet, it’s synchronized Diplomatic, Informational, Military, and Economic (DIME) threads that create compounding pressure tied to commander’s intent. We already declared this direction in the National Security Strategy and National Defense Strategy; the gap isn’t concepts, it’s cadence.[1],[2] Our Cyber Strategy adds the missing verb—persistent—and names the logic: shape, contest, and impose costs every day, not just during “incidents.”[17] This section turns that policy into a practical playbook: map the adversary’s load-bearing dependencies, apply legal/financial/info/cyber levers in parallel, and keep the costs on.

Target their load-bearing beams

Russia: energy leverage. Their power projection rides on hydrocarbons and the ability to coerce via supply. The European Union's (EU's) REPowerEU moves and the wider European energy diversification agenda showed how to turn that lever back on Moscow—re-route flows, blunt pricing power, and shrink the coercion window.[52],[53],[54] Make this structural: treat liquefied natural gas (LNG) contracts, pipeline chokepoints, and grid interconnects as operational terrain; bake energy stress tests into wargames and diplomacy so Moscow’s best play carries political risk every time.

The final paragraph in the Russian section of paper #2, published in August 2023, read as follows:

The US DoD cannot afford to wait until Russia potentially collapses demographically or economically as Russia is already well aware of these shortfalls and has pivoted to exploit US philosophies, doctrines and policies as a weakness in hybrid war. Russia cannot wait for the turmoil caused by the 2024 election season to come fast enough and use their hybrid tools to potentially alleviate the DIME pressures. Quite to the contrary, the US must continue to ratchet up the pressure until Russia doesn't just capitulate in Ukraine, but is deterred from destabilizing western interests in perpetuity. Russia's aims don't seek to make the world a better place, but rather just to make a select group of Russians even richer than they already are through the use of deceit and death. Until that model is destroyed, Russia's nationalistic demise must remain a priority.

None of that has changed.

PRC: manufacturing + capital pipelines. Their advantage is scale (manufacturing) and reach (capital/tech acquisition). We don’t beat scale with rhetoric; we beat it with guardrails and alternatives. Tighten advanced computing export controls to slow military-use tech transfer;[55] use Committee on Foreign Investment in the United States (CFIUS) authorities to shape the inbound/outbound capital that packages intellectual property (IP) for re-export.[56] Pair the stick with a carrot: onshore/ally-shore where it matters and standardize data contracts so coalition industry can plug in without bespoke integration costs (the doctrine from Section 2’s API FRAGOs). The goal isn’t autarky; it’s selective friction in the parts of the stack that convert to military advantage fast. China has a massive number of internal problems that will eventually force positive changes. In the meantime, these measures keep China from leveraging its current advantages, because the costs we impose will always outweigh what it could gain by acting against us.

Iran & North Korea: sanctions evasion and cyber financing. Both regimes use cyber to raise funds and bypass controls; our lever is financial plumbing discipline plus consequences that stick. Treat the Office of Foreign Assets Control's (OFAC's) ransomware guidance as a standing order: push compliance and transparency through exchanges, insurers, incident response (IR) firms, and managed service providers (MSPs) so the easy off-ramps close.[57] Combine that with public attributions, hunt-forward finds, and Letters of Marque 2.0 Black List activities (both covered below) to keep their cost of doing business rising.

Keep “hack the voter” on the board

We learned the hard way that targeting the voter and the attention algorithms is cheaper than targeting the ballot box.[58],[59],[60],[61],[62],[63] In Part 2, we walked through how Russia and others pair narratives with cyber theft/leaks to generate cycles of outrage on platform rails; in Part 5, we argued for moving from ticket-clearing to campaigning with allies. Keep that energy: make defend forward the default posture—shape the environment before the news cycle starts.[18] That means:

- Pre-bunk, not just debunk. Build content libraries and media partnerships that explain the playbook before it runs.

- Authenticity infrastructure. Verify provenance at machine speed for high-risk narratives (e.g., deepfakes tied to election timing).

- Information Operations (IO) + cyber pairing. When theft plus leak is the pattern, our counter is resilience ops (reduce the leak’s half-life) plus counter-exposure (burn the adversary’s TTPs in public to degrade future effect).

Algorithms are terrain—seize the choke points

Treat platform ranking, recommendation, and ad delivery as maneuver terrain. You don’t control it, but you can shape it:

- Truthful amplification: pack authoritative narratives into formats the ranking systems reward (consistency, velocity, interaction).

- Latency as a weapon: use pre-approved message kits so surrogates can flood the zone in minutes during a crisis, not hours.

- Friction to malign ops: coordinate with platforms on rapid throttles for known playbooks (coordinated inauthentic behavior, boosted hacked materials) while staying inside U.S. speech constraints.[62],[63]

The test isn’t vibes; it’s reach vs. reach: how fast we get authoritative context in front of the same audiences the adversary is buying or botting.

Pre-position Hunt-Forward teams with allies

US Cyber Command's (CYBERCOM's) hunt-forward operations are the most successful “small footprint, high leverage” tool we have: forward teams gain TTPs, indicators, and tradecraft straight from adversary networks, and share them back into U.S./ally defenders at speed.[65] Institutionalize the rhythm:

- Standing invitations with priority partners tied to election cycles, energy events, or major exercises.

- Telemetry return as a deliverable: TTP packages published to CVE/SBOM pipelines in hours, not white papers months later. We expand on this in Section 12 of this paper.

- Reciprocity: allies get early warning and tooling; we get ground truth that makes our defend forward posture real.[18]

Build and use a DIME Tasking Order (DTO)

Treat DIME like air tasking: a weekly DTO that aligns diplomatic asks, information ops, military actions, and economic/legal moves to a single commander’s intent. Understand that while the President and Congress ultimately control that intent, the Unified Combatant Commanders (UCCs) are already "moving and shaking" significantly across DIME, and need to do so far more. A DTO page might look like:

- Intent: Raise the marginal cost of Russian energy coercion through Q3 while preserving allied supply resilience.

- Diplomatic: EU energy consultations + targeted assistance for interconnect upgrades.[53]

- Informational: pre-bunk content series on energy blackmail tactics; coordinate release windows with allies.

- Military/Cyber: hunt-forward on energy sector partners; publish hard-won TTPs into CVE-driven patching drills.[17],[65]

- Economic/Legal: enforce price-cap compliance; update export control frequently asked questions (FAQs); CFIUS signaling on sensitive deals.[55],[56]

The DTO is how we replace “everyone doing good things” with effects that stack.
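One way to enforce "effects that stack" is to treat each DTO page as a structured record and refuse to publish it unless every DIME line is tasked against the stated intent. The sketch below is a hypothetical data model (`DTOPage`, the line-of-effort keys) built from the example page above; it is not an existing DoD format.

```python
from dataclasses import dataclass, field

DIME_LINES = ("diplomatic", "informational", "military_cyber", "economic_legal")

@dataclass
class DTOPage:
    intent: str
    actions: dict = field(default_factory=dict)  # line of effort -> list of tasked actions

    def gaps(self) -> list:
        """DIME lines with nothing tasked this week."""
        return [line for line in DIME_LINES if not self.actions.get(line)]

    def publishable(self) -> bool:
        """A page ships only with a stated intent and every DIME line tasked."""
        return bool(self.intent.strip()) and not self.gaps()

page = DTOPage(
    intent="Raise the marginal cost of Russian energy coercion through Q3",
    actions={
        "diplomatic": ["EU energy consultations"],
        "informational": ["Pre-bunk series on energy blackmail"],
        "military_cyber": ["Hunt-forward with energy sector partners"],
    },
)
print(page.gaps())         # ['economic_legal']
print(page.publishable())  # False
```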

Codify a deterrence ladder for cyber + info ops

Deterrence here is not a one-shot threat; it’s a transparent ladder of consequences that moves across domains and stays below unintended escalation. Anchor it in the DoD Cyber Strategy (persistent engagement) and the earlier 2018 cyber strategy (defend forward).[17],[19] A usable ladder:

- Silent friction: blocklists, takedowns, and behind-the-scenes demarches.

- Attribution + exposure: name responsible units, publish TTPs, and burn infrastructure in public.

- Financial/legal squeeze: targeted sanctions, export control denial, CFIUS signaling, and secondary risk warnings for enablers.[55],[56],[57]

- Cyber counter-effects: proportional, reversible disruption of the hostile campaign infrastructure tied to clear redlines.[17]

- Cross-domain costs: visible exercises, posture adjustments, and, if needed, conventional responses that signal risk to what the adversary values.

Publish the rules, log the steps, and climb deliberately. Ambiguity helps the offense; ladders help the defense.
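"Log the steps, and climb deliberately" can be made mechanical: an ordered ladder that only moves one rung at a time and records a justification for every move. The rung names mirror the list above; the class and method names are hypothetical, and real escalation decisions obviously sit with human authorities, not code.

```python
from enum import IntEnum

class Rung(IntEnum):
    SILENT_FRICTION = 1
    ATTRIBUTION_EXPOSURE = 2
    FINANCIAL_LEGAL_SQUEEZE = 3
    CYBER_COUNTER_EFFECTS = 4
    CROSS_DOMAIN_COSTS = 5

class DeterrenceLadder:
    def __init__(self) -> None:
        self.rung = Rung.SILENT_FRICTION
        self.log = [(self.rung, "initial posture")]

    def climb(self, justification: str) -> Rung:
        """Move up exactly one rung and log why; skipping rungs is not possible."""
        if self.rung is Rung.CROSS_DOMAIN_COSTS:
            raise ValueError("already at the top rung")
        self.rung = Rung(self.rung + 1)
        self.log.append((self.rung, justification))
        return self.rung

    def descend(self, justification: str) -> Rung:
        """Step down one rung when the hostile campaign stops, and log it."""
        if self.rung is Rung.SILENT_FRICTION:
            raise ValueError("already at the bottom rung")
        self.rung = Rung(self.rung - 1)
        self.log.append((self.rung, justification))
        return self.rung

ladder = DeterrenceLadder()
ladder.climb("campaign persisted after demarche")
print(ladder.rung.name)  # ATTRIBUTION_EXPOSURE
```

The published log is the deterrent: the adversary can see exactly which behavior moved us up a rung.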

Letters of Marque 2.0: Bounties, Guardrails, and Continuous Cost-Imposition

If we’re serious that the civilian software market is the arsenal, then we need lawful ways to task and pay that arsenal for effects—defensive and offensive—without conscripting everyone into government payrolls or slow-rolling them through bespoke compliance obstacle courses. The 18th-century mechanism already exists in our constitutional toolkit (letters of marque and reprisal, specifically in Article I, Section 8, Clause 11). We modernize it for cyberspace, wrap it in contemporary law and alliance norms, and make it measurable. Think privateering, but with SBOMs, CVE/KEV, and JADC2, not sails.

We stand up four transparent lists—each with a public charter, published bounty schedules, and harsh guardrails. The point isn’t vigilantism; it’s to operationalize the civilian arsenal inside a strategy that’s (1) legal, (2) controllable, (3) auditable, and (4) fast.

The Blue List (build the tools)

This isn’t a target list—it’s a fee schedule for capability. We pay developers to produce, maintain, and safely custody government-directed exploit chains mapped to CVEs/KEVs and priority emulation plans. We prefer open-source scaffolding under permissive licenses (so we can security-review and fork if needed), but we control release and use under contract. The deliverable isn’t a “zero-day grenade”; it’s a tested module with reproducible build, SBOM, usage predicates, and a lawful-use wrapper that binds it to authorized operators, mission contexts, and rules of engagement. Blue List work folds into red-team programs, hunt-forward kits, and cost-imposition options that live under U.S. authorities and coalition legal frameworks (defend-forward isn’t a bumper sticker; it’s a pipeline).[17],[18],[19],[45],[46],[47],[123]

The White List (protect the commons)

Here we fund continuous open source software (OSS) supply-chain hygiene—looking for dependency poisoning, typosquats, malicious updates, and CI/CD compromise in the libraries we all rely on (OpenSSL-class projects, container bases, crypto libs). No per-find “bounty” here; we pay for coverage and dwell-time reduction: monitored package sets, mean-time-to-detect, and coordinated disclosure velocity. White List contractors push fixes upstream, generate attestations, and publish risk advisories that our cATO lanes can consume automatically.[5],[45],[46],[47]

The Red List (find and fix ourselves)

This is a bounty schedule for U.S./allied infrastructure—critical services, DoD enclaves, defense industrial base (DIB), base networks—the places that keep ACE alive. Red List pays more than Blue because dwell-time here is lethal. Rewards are tied to patch availability + deployability: you get paid more if you deliver a fix or configuration that our lanes can push immediately (with rollback tested) and if you supply detection content and forensics playbooks. Payment also scales with blast-radius avoided (rewarding responsible disclosure paths that minimize exploitation risk).[5],[6],[7],[44],[81]
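The Red List pricing logic above (more for a deployable fix with tested rollback, more for detection content, more for blast radius avoided) can be expressed as a transparent fee schedule. The function below is a hypothetical illustration: the multipliers are placeholder values chosen to show the shape of the schedule, not proposed rates.

```python
def red_list_payout(base: float, fix_deployable: bool, rollback_tested: bool,
                    detection_content: bool, blast_radius_avoided: float) -> float:
    """Hypothetical Red List payout.

    blast_radius_avoided is a 0.0-1.0 score of exposure prevented by the
    disclosure path; all multipliers are illustrative placeholders.
    """
    if not 0.0 <= blast_radius_avoided <= 1.0:
        raise ValueError("blast_radius_avoided must be in [0, 1]")
    multiplier = 1.0
    if fix_deployable and rollback_tested:
        multiplier += 1.0   # a fix our lanes can push immediately
    if detection_content:
        multiplier += 0.5   # detections and forensics playbooks included
    multiplier *= 1.0 + blast_radius_avoided
    return base * multiplier

print(red_list_payout(10_000, True, True, True, 0.5))  # 37500.0
```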

The Black List (cost-imposition targets)

This is the only actual target list, and it is overt once published. Getting on it is not. Nominations flow to the National Security Council (NSC); State, Defense, and Justice jointly validate; a Foreign Intelligence Surveillance Act (FISA) court reviews the package for lawful scope and minimization; Congress (specifically the Senate, through the Senate Select Committee on Intelligence) authorizes issuance under an updated cyber letters-of-marque statute. Up to publication, everything is classified. After publication, any licensed privateer (read: bonded, cleared vendors under standing contracts) can compete to lawfully degrade the listed entity’s capabilities in tightly defined bands (disruption, not destruction; strict Law of Armed Conflict (LOAC)/Tallinn compliance; human-safety carve-outs; no critical-infrastructure spillover) with escrowed digital-asset payouts on verified effects. “Transparent to the world, auditable to the government.” That means privacy-preserving but compliant rails: Treasury/OFAC guardrails apply; payouts are pseudonymous to the public but fully traceable to U.S. oversight. No cowboys, no crime-as-a-service; licensed firms only, revocable charters, real penalties.[17],[18],[57],[124] While the other three lists don't require Letters of Marque legislation and can be implemented through consortium-based other transaction agreements (OTAs) or indefinite delivery, indefinite quantity (IDIQ) contracts, the Black List will require legislative action.

A few hard rules keep this from turning into a tragedy of the commons:

- Deconfliction with CYBERCOM and allies is real-time. If a Blue/Black action collides with an in-progress operation or intel source, the stoplight turns red and the chartering authority pauses payment until conflict resolves.

- No “stockpile and pray.” Blue List modules have expiry and review cycles; if a CVE/KEV fix lands and defenders patch, we retire or repurpose.

- Civilian protections and human safety are non-negotiable. Effects that risk physical harm, medical systems, or public safety are out of scope unless explicitly authorized with additional safeguards (rare, and under military command).

- Allies first. If a listed entity has infrastructure in a partner state, we use consent-based playbooks; Black List isn’t a hall pass to create diplomatic incidents.

- Metrics or it didn’t happen. We score: vulnerability dwell-time, time-to-patch on Red finds, OSS coverage on White, effect-per-dollar on Black (with collateral-risk score at zero), and collision rate with ongoing ops (should be vanishingly small).
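Two of the hard rules above are simple enough to automate as gates in the chartering authority's tooling: Blue List modules that retire on expiry or once defenders patch, and a deconfliction stoplight that pauses payment. This is a hypothetical sketch; `BlueModule`, `payment_light`, and the dates are illustrative only.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class BlueModule:
    module_id: str
    expires: date                   # hard expiry set at charter time
    target_patched: bool = False    # defenders have fielded the CVE/KEV fix

    def retired(self, today: date) -> bool:
        """No stockpile-and-pray: retire on expiry or as soon as defenders patch."""
        return today >= self.expires or self.target_patched

def payment_light(collides_with_operation: bool, conflict_resolved: bool) -> str:
    """Deconfliction stoplight: red pauses payment until the conflict resolves."""
    return "red" if collides_with_operation and not conflict_resolved else "green"

m = BlueModule("BM-001", expires=date(2026, 1, 1))
print(m.retired(date(2025, 6, 1)))   # False
m.target_patched = True
print(m.retired(date(2025, 6, 1)))   # True
print(payment_light(True, False))    # red
```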

In practical terms, Letters-of-Marque 2.0 helps us do what we already say we’re doing—defend forward and impose costs—but at market speed, with broader hands and narrower risk. It pays Blue to keep the toolchain ready, pays White to keep the commons clean, pays Red to make our house safer, and pays Black to make an adversary’s day worse—all inside law, with telemetry, timelines, and a published ladder of consequences.

How we run this on Monday

- Name three adversary dependencies per theater (e.g., refinery throughput, satellite links, capital controls), and assign a DIME owner per dependency.

- Stand up a DTO cell inside the UCC with officers from State, Treasury, DoD, and the interagency; one 2-page DTO every Friday.

- Wire intel to action: require that every Hunt-Forward product triggers a CVE/SBOM check across affected sectors within 72 hours.[46],[47],[65]

- Election clock discipline: 90 days before key votes, pre-bunk packages and authenticity tooling are staged with platforms and allies, ready to flood commercial algorithms through both official and proxied channels with factual content, as opposed to foreign state propaganda that exploits algorithms narrowly optimized for emotional engagement to increase revenue.[58],[59],[60],[61],[62],[63] Distribution can run both through burnable networks stood up via containerization in commercial sectors and through official press releases from Public Affairs (PA).

- Measure what matters: time-to-DTO effect, adversary campaign half-life, price-cap compliance rates, reach-for-reach ratios on major narratives, and cost-per-marginal-attack imposed by our controls.[1],[2],[17]

- Begin draft legislative activities for Letters of Marque 2.0.

Hybrid war rewards coordination speed. If we align DIME-scale actions to commander’s intent and run them on a DTO cadence, we stop treating adversary strengths as facts of nature and start turning them into load-bearing liabilities, every week, on purpose.

5) OODA Across the Enterprise (Acquisitions, Research, Development, Test, Evaluation and Sustainment)

OODA is no longer a cockpit trick; it’s an institutional metabolism. The organizations that learn fastest win—even when their platforms aren’t the fastest. That’s the plain reading of the last decade of software-in-defense, from the Defense Innovation Board’s diagnosis (ship smaller changes, more often) to the Air Force’s “Accelerate Change or Lose” and follow-on Action Orders.[27],[66],[67] This section is about turning that mantra into mechanics you can run every week across acquisition, research & development (R&D), and test.

Collapse Observe/Orient

Observe isn’t a brief; it’s telemetry. Every operational thread—air, space, cyber, logistics—should emit event streams and traces that flow into common stores instrumented for model ops (data versioning, lineage, drift detection). Pair that with two outside-in feeds that must be treated as first-class citizens:

- Known Exploited Vulnerabilities (KEV) watch: treat the Cybersecurity and Infrastructure Security Agency's (CISA's) KEV catalog like a standing frag order. If a KEV touches anything in your mission thread, a patch/mitigation SLO clock starts within hours, not quarters.[47] The MITRE CVE list is the development standard we hold our own software against during dev cycles; the KEV list is a 5m target set. These are beyond zero days and into the realm of script kiddies: KEVs will be employed by organizations at the tactical edge, not just by strategic state actors like GRU Units 26165 and 74455 or PLA Unit 61398, which engineer zero days for advanced persistent threat (APT) vectors.

- SBOM intelligence: suppliers publish SBOMs; we continuously match them to KEV/CVE and vendor advisories so orient is automated, not manual. When the dependency graph moves, your risk picture updates without a meeting.[47],[68]

The point isn’t more dashboards—it’s fewer surprises. When logs, model metrics, KEV hits, and SBOM deltas live in one fabric, “orient” is a query, not a tiger team.
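A minimal sketch of "orient is a query": match KEV entries against a per-thread asset inventory and start an SLO clock for every hit. The entry fields (`cveID`, `vendorProject`, `product`) follow the public CISA KEV JSON feed as we understand it (verify against the current schema before relying on them); the sample entry, the inventory, and the 72-hour SLO are hypothetical placeholders.

```python
import json
from datetime import datetime, timedelta, timezone

# Hypothetical excerpt shaped like the CISA KEV JSON feed (placeholder values).
KEV_SAMPLE = json.loads("""
{"vulnerabilities": [
  {"cveID": "CVE-XXXX-0001", "vendorProject": "ExampleVendor", "product": "ExampleRouter"}
]}
""")

# Hypothetical inventory: (vendor, product) pairs present in each mission thread.
THREAD_INVENTORY = {
    "base-defense": {("ExampleVendor", "ExampleRouter")},
    "maritime-isr": {("OtherVendor", "OtherGCS")},
}

PATCH_SLO = timedelta(hours=72)  # assumed SLO; set per mission thread

def start_slo_clocks(kev: dict, inventory: dict, detected_at: datetime) -> list:
    """Return (thread, cveID, deadline) for every KEV entry touching a thread."""
    clocks = []
    for vuln in kev["vulnerabilities"]:
        key = (vuln["vendorProject"], vuln["product"])
        for thread, assets in inventory.items():
            if key in assets:
                clocks.append((thread, vuln["cveID"], detected_at + PATCH_SLO))
    return clocks

detected = datetime(2025, 1, 15, 6, 0, tzinfo=timezone.utc)
for clock in start_slo_clocks(KEV_SAMPLE, THREAD_INVENTORY, detected):
    print(clock)
```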

Shorten Decide

“Decide” should be bounded authority plus fast paths, not heroics. We already have the statutory tools; we just don’t route enough decisions through them:

- Use the fast lanes deliberately. We've already made the software acquisition pathway (SWP) the preferred acquisition tool for software.[69] The SWP intentionally leverages some of the most flexible capabilities from MTA—the commercial solutions opening (CSO) atop the OTA[9]—to make software acquisition faster, but the cybersecurity onboarding must accelerate as well.[11],[70]

- Empower PMs with pre-approved patterns. Give PMs authority to ship inside guardrails (DevSecOps pipeline, cATO inheritance, data contracts) without staging milestone theater every sprint.[8] The commander on G-Series orders will take a standardized body of evidence (BOE) to determine if they will use the new code; PMs and AOs are now lethality-enablers supporting a command staff, not gate-keepers beholden only to a CIO-driven hierarchy.

- Codify decision SLOs. Example: “If an increment is within MTA thresholds and uses the approved pipeline, the default decision is ‘go’ in ≤10 business days.” You can’t outrun delay with more slides; you outrun it with default-to-yes rules that leadership must actively override.
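The decision SLO above is easy to encode as a default-to-yes rule. This sketch computes the date on which an in-threshold increment on the approved pipeline becomes "go" unless leadership overrides it. The function names are hypothetical, and it counts weekdays only, ignoring federal holidays, which a real implementation would need to handle.

```python
from datetime import date, timedelta

def default_go_date(submitted: date, business_days: int = 10) -> date:
    """Date an eligible increment defaults to 'go'. Skips weekends; ignores holidays."""
    current = submitted
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current

def decision(in_thresholds: bool, approved_pipeline: bool, override: bool,
             submitted: date, today: date) -> str:
    if not (in_thresholds and approved_pipeline):
        return "standard review"
    if override:
        return "held by override"   # leadership must act to stop it
    return "go" if today >= default_go_date(submitted) else "pending"

print(default_go_date(date(2025, 3, 3)))  # 2025-03-17
```

The design choice is where the burden sits: silence produces "go", and only an explicit override produces "no".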

Part 1 framed the economy we actually have—software, data, networks—and Part 4 argued for hazard-based control; “Decide” is where those meet: leaders decide once to trust patterns, then stop re-deciding every deploy.

Shorten Act

Act is shipping—small, safe, continuous. Two ingredients make that possible at enterprise scale:

- Commercial plumbing: pre-stage multi-cloud landing zones with identity, logging, and service mesh so teams deploy to compute-as-utility, not snowflake stacks.[71],[72] Tie this to the DoD Software Modernization Strategy and the DoD DevSecOps Reference Design so pipelines are boring, repeatable, and inherited, not artisanal.[4],[8]

- S-curve releases: ship features behind flags; roll via rings (canary → squadron → wing → theater). If a KEV/CVE spikes or telemetry shows regressions, rollback is a toggle, not a memo.

When the platform is an S-curve, your Act loop runs on engineering cadence—not POM cycles.
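A minimal sketch of ring-based release with rollback as a toggle, using the canary → squadron → wing → theater rings named above. The `Rollout` class and its health inputs are hypothetical; a real system would read telemetry and KEV/CVE alerts from the pipeline rather than take booleans.

```python
RINGS = ("canary", "squadron", "wing", "theater")

class Rollout:
    def __init__(self, feature: str) -> None:
        self.feature = feature
        self.ring_index = -1   # -1 means not yet released
        self.enabled = False   # the feature flag itself

    def promote(self, telemetry_healthy: bool, kev_alert: bool) -> str:
        """Advance one ring when healthy; otherwise roll back by flipping the flag off."""
        if not telemetry_healthy or kev_alert:
            self.enabled = False          # rollback is a toggle, not a redeploy
            return "rolled back"
        if self.enabled and self.ring_index < len(RINGS) - 1:
            self.ring_index += 1          # widen the blast radius one ring at a time
        elif self.ring_index < 0:
            self.ring_index = 0           # first release goes to canary only
        self.enabled = True               # after a rollback, re-enable at the same ring
        return RINGS[self.ring_index]

r = Rollout("new-isr-fusion")
print(r.promote(True, False))   # canary
print(r.promote(True, False))   # squadron
print(r.promote(True, True))    # rolled back
print(r.promote(True, False))   # squadron
```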

Make PPBE Pay for Learning

You get the behaviors you budget. Right now, PPBE pays primarily for starts and sustainment, not learning velocity. The fix is mechanical:

- Define budgetable software effects. Treat deployed code, retired code, and telemetry coverage as deliverables whose acceptance triggers obligation/expenditure. Killing bad software should book as a win, not a loss.[20],[21],[22]

- Adopt outcome measures that cross threads. Time-to-patch CVE, time-to-field (MTA/UON/JUON),[73],[74] T2D (JADC2 data path)[4],[8],[72] and cost-per-effect (e.g., CCA/sUAS in Sec. 7) become the portfolio scoreboard.

- Resource the boring plumbing. Fund shared pipelines, common data models, and test ranges as infrastructure—not as “nice to have” line items that get raided in execution.[20]

We don’t need new poetry here. The PPBE Commission already laid out the direction; our job is to wire the accounting to the learning.[20]

Turn OT&E into a Continuous Test Fabric

The current OT&E muscle memory: a big event, a big report, a long wait. That’s incompatible with software velocity. We need test as a fabric:

- Instrument everything. If an exercise doesn’t generate labeled datasets and failure modes that feed back into code within days, it’s theater. DoDI 5000.89 and 5000.90 give you the hooks to require telemetry-driven evaluation and program management that plans for it.[25],[26]

- Shift left and right. Use synthetic ranges/digital twins in dev when possible, then carry the same scenarios into live ops so results are comparable and regressions obvious.

- Publish reusable artifacts. Test threads should end with datasets, scenario packs, and model benchmarks that other units can run—once made, used many times.

This isn’t anti-rigor. It’s the only way to keep rigor while the world moves.

The Eight Clocks

Every headquarters should see—at a glance—eight clocks that define enterprise OODA:

- Time-to-effect: the change in time to deliver a mission capability. The change is typically a UI enhancement or similar improvement to a mission thread, measured in seconds gained toward outcomes in the commander's ultimate value stream: warfighter effects.

- Time-to-detect: from incident/CVE/KEV publication to alert in the mission thread.[47]

- Time-to-patch/mitigate: from alert to fix in production, measured per system and supplier.[46],[47]

- T2D: from validated requirement to funded path (MTA/UON/JUON/Other).[73],[74],[75]

- Time-to-field: from funded path to capability in user hands (release ring cadence).[4],[8],[72]

- Time-to-rollback: from anomaly to safe state (feature flag or config)

- Time-to-deprecate: from decision to retire to last user off (technical-debt burn rate).[20]

- Telemetry coverage: % of mission thread emitting the agreed event schema (observe/orient health).

If a capability improves these clocks, it’s winning—even if the slide is boring. If it doesn’t, it’s noise.
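Most of the Eight Clocks are just elapsed time between two logged events, so a dashboard can compute them directly from the event log. The sketch below covers three interval clocks plus telemetry coverage; the event names are hypothetical, and the remaining clocks follow the same pattern once their start and end events are defined.

```python
from datetime import datetime, timedelta

# Each interval clock is measured between two named events in a capability's log.
CLOCKS = {
    "time_to_detect": ("kev_published", "alert_in_thread"),
    "time_to_patch": ("alert_in_thread", "fix_in_production"),
    "time_to_rollback": ("anomaly_detected", "safe_state"),
}

def clock_readings(events: dict) -> dict:
    """Elapsed time per clock, only where both start and end events are logged."""
    return {name: events[end] - events[start]
            for name, (start, end) in CLOCKS.items()
            if start in events and end in events}

def telemetry_coverage(emitting: int, total: int) -> float:
    """Percent of mission-thread components emitting the agreed event schema."""
    return 100.0 * emitting / total

events = {
    "kev_published": datetime(2025, 1, 1, 0, 0),
    "alert_in_thread": datetime(2025, 1, 1, 4, 0),
    "fix_in_production": datetime(2025, 1, 2, 4, 0),
}
print(clock_readings(events))
print(telemetry_coverage(45, 60))  # 75.0
```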

Monday Morning Version

- Mandate CVE/KEV/SBOM integration in every pipeline by the end of the quarter; publish the SLOs and measure them weekly.[46],[47]

- Default to MTA/UON/JUON for software increments under defined thresholds; publish the thresholds and train PMs and their associated KOs on how to apply them.[73],[74]

- Stand up a commercially oriented “standard stack” (identity, logging, service mesh, flag service) and make it the only allowed landing zone for new code unless waived at the PEO level.[4],[8],[72] Use a contractor-owned, contractor-operated (COCO) model for onboarding commercial dual-use technology, and a separate stacked COCO model for developing government-owned IP for government-only problems. Both use the same exposed API model to integrate effects, but they are separate contracts managed by independent PEOs to prevent a single monolith from taking over the API models. We must prevent the data-ownership mistakes made in the Maven contract from happening again.

- Adopt the Eight Clocks as the portfolio dashboard and tie quarterly reviews to movement on those clocks.[20],[21],[22]

- Rewrite OT&E tasking so every event must produce datasets/benchmarks that feed a backlog within 10 business days.[25],[26]

Tie-backs to the series

- In Part 1 we argued we should organize around information/software/networks as maneuver. OODA-as-metabolism is how you operate that organization.

- In Part 2 we mapped adversaries who already cycle fast (sanctions evasion, energy leverage, information ops). You don’t out-message them with slower loops.

- In Part 3 we showed that acquisitions policy is no longer the bedrock of American supremacy, but is actually the reason we're falling behind our peers despite exceptionalism in the commercial sector; Section 5 is the leadership plumbing that turns this around while modernizing the department to align with commercial advantages.

- In Part 4 we showed that acquisitions policy alone won't usher in the future; we have to reframe how we think about TTP. Here, that becomes runtime guardrails around shipping, not gates that freeze learning.

Speed of flight still matters. But speed of learning beats speed of flight. If we wire CVE/KEV/SBOM into observe/orient, route decisions through MTA/UON/JUON acquisitions, act on COCO pipelines, pay for deprecations, and make OT&E a fabric, we stop admiring OODA and start living it—across the enterprise, every week.

6) Swarm-on-Swarm, Directed Energy & Micro-EMP

Treat the swarm like combined arms, not a gadget problem. The force that layers low-cost kinetic, EW, cyber, and deception by cost-per-effect will win the attrition race against mass sUAS and attritable CCAs. That means we build an effects stack where cheap counters meet cheap threats first, reserving exquisite shots for exquisite targets—and we wire this stack into the same software-centric loop we laid out above.

Swarm-as-Combined-Arms (by cost-per-effect)

Publish a shot doctrine: who fires first, at what range, and at what density:

- Deception & cyber pre-shot: spoof, saturate, and feed garbage; force the adversary’s autonomy to chase ghosts and burn their batteries and operator attention before we burn our magazines.[42],[43],[44] They will be doing the same thing to us, so it’s imperative to have superior AI software churn rates to win this skirmish every time.

- EW as the workhorse: deny C2 links, jam GNSS, and wring guidance stacks until they fall into soft-kill envelopes. EW has the best cost curve against mass sUAS, particularly when paired with decoys that pull swarms off defended routes,[42],[43] but it can be overcome in many ways, from exquisite onboard autonomy (which still costs only pennies per sUAS at the edge when fielded at mass) to hardening.

- Directed energy for volume kills: when density spikes, lasers and HPM systems reset the economics, answering threats that cost thousands of dollars apiece with shots that cost a few dollars each, provided we pre-position power, cooling, and clear lines of sight.[39],[40],[41] DE is not a tactical panacea, but it is often a life-saver for agile combat employment (ACE) deployments facing scaled low-cost sUAS attacks.

- Phased kinetic as the backstop: guns, air-to-air missiles (AAMs), and interceptors finish what soft-kill and DE don’t. Air-to-air guns mounted on low-cost sUAS are a cost-effective way to attrit one-way attack (OWA) sUAS, and they are also highly effective at "paving the track" for swarm effects to reach their targets by neutralizing enemy interceptors ahead of the main effort.[76] For C-UAS, air-to-air sUAS interceptors become an effective low-cost defensive counter-air (DCA) doctrine: a recoverable asset able to operate for pennies per engagement. Even in this scenario, save the expensive arrows for the targets that merit them.[76],[77],[78]
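A shot doctrine ordered by cost-per-effect can be expressed as a simple selection rule: among effectors that are in range and still have rounds, pick the one with the lowest expected cost per kill, and refuse anything above what the engagement is worth. The minimal Python sketch below assumes independent shots and uses made-up costs, kill probabilities, and ranges; the `Effector` fields and the `max_cost_per_kill` ceiling are hypothetical illustrations, not program data.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Effector:
    name: str
    cost_per_shot: float  # dollars per engagement (illustrative, not program data)
    p_kill: float         # single-shot probability of kill (illustrative)
    max_range_km: float
    rounds: int           # engagements left in the magazine or battery


def expected_cost_per_kill(e: Effector) -> float:
    # With independent shots, the expected number of shots per kill is 1 / p_kill.
    return e.cost_per_shot / e.p_kill


def choose_effector(stack: Sequence[Effector], threat_range_km: float,
                    max_cost_per_kill: float) -> Optional[Effector]:
    """Return the cheapest in-range effector by expected cost per kill.

    max_cost_per_kill is the most this engagement is worth spending; anything
    above it is held back for targets that merit it. Returns None if nothing
    qualifies (maneuver, accept the leak, or escalate to a human).
    """
    candidates = [
        e for e in stack
        if e.rounds > 0
        and e.max_range_km >= threat_range_km
        and expected_cost_per_kill(e) <= max_cost_per_kill
    ]
    return min(candidates, key=expected_cost_per_kill, default=None)


stack = [
    Effector("EW jammer", cost_per_shot=50, p_kill=0.5, max_range_km=5, rounds=1000),
    Effector("HEL", cost_per_shot=10, p_kill=0.8, max_range_km=3, rounds=500),
    Effector("sUAS gun interceptor", cost_per_shot=500, p_kill=0.6, max_range_km=10, rounds=40),
    Effector("AAM", cost_per_shot=400_000, p_kill=0.9, max_range_km=50, rounds=8),
]
print(choose_effector(stack, threat_range_km=2, max_cost_per_kill=5_000).name)  # HEL
```

With these illustrative numbers, a threat at 8 km falls to the gun-armed sUAS, and at 20 km nothing clears a $5,000 ceiling, so the AAM stays on the rail unless a commander deliberately raises the ceiling.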

This is not theory. DoD's C-UAS assessments and strategy already point to the integration challenges and coordination gaps; we fix them by commanding the cost curve as a tactic, not a PowerPoint slide.[42],[43],[44]

Directed Energy as the Economics Breaker

HELIOS at sea, DE M-SHORAD on land, and THOR for base defense give us scalable counters when the sky is busy and magazines are thin.[39],[40],[41] Three practicalities matter more than swagger demos:

- Power choreography: DE fights are logistics fights. Publish power budgets and recharging concepts in ACE playbooks; co-locate mobile generation with DE nodes so they’re not hostage to a single feeder.[79],[80]

- Thermal SLOs: track duty cycle, dwell, and cooling as operational metrics. A laser that can’t manage heat mid-salvo is just an idea.

- Fire control fusion: DE must sit inside the same sensor-to-shooter micro-loops as guns and EW. That means common event schemas and latency SLOs, not custom stovepipes.
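A thermal SLO only means something if it can be computed from duty cycle, dwell, and cooling. The minimal Python sketch below uses a lumped heat model (one heat reservoir, constant waste heat while lasing, constant chiller rejection) to count how many engagements a DE node sustains before it must stand down. The model and every number in it are hypothetical simplifications, not characteristics of HELIOS, DE M-SHORAD, THOR, or any other fielded system.

```python
from dataclasses import dataclass


@dataclass
class ThermalBudget:
    """Lumped heat model for a DE node (illustrative, not a real system's data)."""
    capacity_kj: float     # heat the node can soak before it must stop lasing
    cooling_kw: float      # continuous heat rejection, kJ removed per second
    waste_heat_kw: float   # heat generated per second of dwell on target
    stored_kj: float = 0.0

    def cool(self, seconds: float) -> None:
        # Between engagements the chiller pulls heat out, never below zero.
        self.stored_kj = max(0.0, self.stored_kj - self.cooling_kw * seconds)

    def fire(self, dwell_s: float) -> bool:
        # Net heat gained during the dwell (cooling keeps running while lasing).
        after = max(0.0, self.stored_kj + (self.waste_heat_kw - self.cooling_kw) * dwell_s)
        if after > self.capacity_kj:
            return False   # thermal SLO breach: this shot is refused
        self.stored_kj = after
        return True


def salvo_capacity(budget: ThermalBudget, dwell_s: float, gap_s: float,
                   max_shots: int = 1000) -> int:
    """Count engagements the node sustains at a given dwell and spacing."""
    shots = 0
    while shots < max_shots and budget.fire(dwell_s):
        shots += 1
        budget.cool(gap_s)
    return shots


node = ThermalBudget(capacity_kj=1000, cooling_kw=50, waste_heat_kw=250)
print(salvo_capacity(node, dwell_s=2, gap_s=2))  # 3
```

At a 50 percent duty cycle this notional node gets three shots before it must stand down; adding chiller capacity or stretching the gap between engagements changes that number, which is exactly the kind of figure an ACE playbook can publish and track.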

When DE is doctrinally first against mass drones, the rest of the stack lasts longer and costs less.

EMP/GMD as Infrastructure Hygiene

Treat electromagnetic pulse (EMP) and geomagnetic disturbance (GMD) protection the way you treat patching: boring, continuous, and non-negotiable. ACE only works if bases, feeders, and regional grids ride through shocks and keep the pipes up.[44],[81] That means:

- Hardening tiers: tier critical C2, fuel, and cooling circuits for survivability; lower tiers ride on portable spares and fast-swap modules.

- Recovery drills: practice black-start and microgrid transitions during ACE reps; success is measured in minutes to ops-recovered, not anecdotes.

- Sensor truthing under stress: validate that navigation, timing, and friend/foe discrimination hold up under EMP/GMD-like conditions—not just sunny-day ranges.
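To make "minutes to ops-recovered" a score rather than an anecdote, a drill cell could log each system's recovery time by hardening tier and compare it against tier targets. The minimal Python sketch below assumes exactly that logging; the tier targets and system names are hypothetical placeholders, not published standards.

```python
from collections import defaultdict

# Hypothetical recovery targets (minutes to ops-recovered) by hardening tier.
TIER_TARGET_MIN = {1: 5, 2: 30, 3: 240}


def score_recovery_drill(events, targets=TIER_TARGET_MIN):
    """events: iterable of (system, tier, minutes_to_ops_recovered).

    Returns the worst observed recovery per tier with a pass/fail flag,
    plus the list of systems that missed their tier's target.
    """
    worst = defaultdict(float)
    missed = []
    for system, tier, minutes in events:
        worst[tier] = max(worst[tier], minutes)
        if minutes > targets[tier]:
            missed.append(system)
    by_tier = {tier: (worst[tier], worst[tier] <= targets[tier]) for tier in sorted(worst)}
    return by_tier, missed


drill = [("C2 node", 1, 4), ("fuel pumps", 1, 9), ("cooling loop", 2, 20), ("lodging", 3, 300)]
print(score_recovery_drill(drill))
```

Scoring the worst case per tier, rather than the average, keeps one fast-recovering C2 node from hiding a fuel system that stayed dark.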

Firmware Agility as a TTP

The munition is software. Treat firmware agility like re-arming: push counter-update cycles that beat the adversary’s patch tempo.

- Bake in SSDF patterns so autonomy stacks and datalinks are built for change (versioned configs, signed updates, rollback paths).[45]

- Demand SBOMs from suppliers and map them to CVE/KEV so we know which component in which bird is exploitable today.[46],[47]

- Sign & surge: pre-approve signing services and distribution channels in cATO pipelines so a hot-fix to a guidance filter or RF front-end ships in hours, not quarters.
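Mapping SBOMs to known-exploited vulnerabilities is, at its core, a join between "what each airframe carries" and "what is being exploited today." The minimal Python sketch below assumes supplier SBOMs have been flattened to `{component: version}` per serial number, and that the KEV catalog has already been joined with CVE affected-version data into records with a `fixed_in` version (the KEV catalog itself does not carry version ranges). Component names, serials, and the `VULN-*` identifiers are hypothetical.

```python
from typing import Dict, List


def parse_version(v: str) -> tuple:
    # Assumes simple dotted numeric versions like "2.3.1".
    return tuple(int(part) for part in v.split("."))


def exploitable_today(sboms: Dict[str, Dict[str, str]],
                      kev_entries: List[dict]) -> Dict[str, List[str]]:
    """Map each airframe serial to the known-exploited vulnerabilities it carries.

    sboms: serial -> {component: version}, flattened from supplier SBOMs.
    kev_entries: {"id", "component", "fixed_in"} records, assumed to be the
    KEV catalog already joined with CVE affected-version data.
    """
    hits: Dict[str, List[str]] = {}
    for serial, components in sboms.items():
        for component, version in components.items():
            for entry in kev_entries:
                if (entry["component"] == component
                        and parse_version(version) < parse_version(entry["fixed_in"])):
                    hits.setdefault(serial, []).append(entry["id"])
    return hits


sboms = {
    "sUAS-0001": {"rf-frontend-fw": "2.1.0", "autonomy-core": "5.4.2"},
    "sUAS-0002": {"rf-frontend-fw": "2.3.1", "autonomy-core": "5.4.2"},
    "CCA-0101": {"autonomy-core": "6.0.0"},
}
kev = [
    {"id": "VULN-RF-1", "component": "rf-frontend-fw", "fixed_in": "2.3.0"},
    {"id": "VULN-AC-7", "component": "autonomy-core", "fixed_in": "5.5.0"},
]
print(exploitable_today(sboms, kev))
```

Here sUAS-0001 is flagged for both vulnerabilities, sUAS-0002 only for the autonomy-core issue, and CCA-0101 comes back clean; that per-bird answer is what tells maintainers which airframes to patch before the next sortie.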

If you can’t update it at operational tempo, you don’t own it—you rent it from yesterday.
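The "versioned configs, signed updates, rollback paths" pattern is commonly implemented with A/B firmware slots: stage the new image in the idle slot, switch, and fall back to the last known-good slot if a post-update health check fails. The minimal Python sketch below assumes that design; HMAC-SHA256 is a stand-in for the asymmetric signatures a real signing service would use, and `FirmwareSlots`, `sign`, and the health-check callback are hypothetical names.

```python
import hashlib
import hmac
from typing import Callable


def sign(key: bytes, version: int, image: bytes) -> bytes:
    # Stand-in for a real signing service: HMAC-SHA256 over version + image.
    return hmac.new(key, str(version).encode() + b"|" + image, hashlib.sha256).digest()


class FirmwareSlots:
    """A/B slots: stage a signed image in the idle slot, switch, and roll back
    automatically if the post-update health check fails."""

    def __init__(self, key: bytes, version: int, image: bytes):
        self._key = key
        self.slots = {"A": (version, image), "B": None}
        self.active = "A"

    def active_version(self) -> int:
        return self.slots[self.active][0]

    def update(self, version: int, image: bytes, tag: bytes,
               health_check: Callable[[bytes], bool]) -> str:
        # Refuse downgrades and anything whose signature does not verify.
        if version <= self.active_version():
            return "rejected"
        if not hmac.compare_digest(sign(self._key, version, image), tag):
            return "rejected"
        previous = self.active
        idle = "B" if previous == "A" else "A"
        self.slots[idle] = (version, image)
        self.active = idle
        if not health_check(image):
            self.active = previous  # rollback path: last known-good slot
            return "rolled back"
        return "applied"


key = b"demo-only-key"
bird = FirmwareSlots(key, version=1, image=b"guidance-filter-v1")
good = b"guidance-filter-v2"
print(bird.update(2, good, sign(key, 2, good), health_check=lambda img: True))  # applied
bad = b"guidance-filter-v3"
print(bird.update(3, bad, sign(key, 3, bad), health_check=lambda img: False))  # rolled back
print(bird.active_version())  # 2
```

Rejecting lower version numbers blocks an adversary from replaying an old, validly signed but vulnerable image, while the health-check rollback still gives crews a fast way back to known-good.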

Train Deny–Deceive–Deplete

Mass sUAS warfare is as much economics as kinetics. Build exercises that bleed the wrong magazines and burn the enemy’s time:

- Inventory-burn traps: set vignettes where blue must choose between shooting $100k interceptors at $2k threats or maneuvering into EW/DE kill boxes. Grade on cost-per-salvo and time-to-rearm, not just kills.[42],[43]

- Deception lanes: practice decoy blooms, ghost corridors, and false electromagnetic signatures to waste adversary swarms. Build swarm-based TTPs that exploit the physical limits of close-in weapon systems (CIWS), such as slew, engagement time per target, and magazine depth, by timing arrivals at specific time/distance intervals that open corridors for exquisite systems to neutralize capital targets.

- Shot-selection drills: give operators a “wallet” and make every trigger pull debit the budget in real time. Leaders learn quickly when the UI shows they blew half a million dollars to swat quadcopters.
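The shot "wallet" can be a very small piece of software: a ledger that debits every trigger pull, hit or miss, and raises a warning once a set share of the budget is gone. The minimal Python sketch below assumes one wallet per crew per vignette; the class name, the 50 percent warning threshold, and the dollar figures are hypothetical.

```python
class ShotWallet:
    """Running cost-per-effect ledger for an exercise crew (illustrative)."""

    def __init__(self, budget: float, warn_fraction: float = 0.5):
        self.budget = budget
        self.warn_fraction = warn_fraction  # alert once this share of the wallet is gone
        self.spent = 0.0
        self.kills = 0
        self.salvos = []

    def fire(self, effector: str, cost: float, killed: bool) -> None:
        # Every trigger pull debits the wallet, hit or miss.
        self.spent += cost
        self.kills += int(killed)
        self.salvos.append((effector, cost, killed))

    @property
    def remaining(self) -> float:
        return self.budget - self.spent

    def cost_per_kill(self) -> float:
        return self.spent / self.kills if self.kills else float("inf")

    def warning(self) -> bool:
        return self.spent >= self.warn_fraction * self.budget


wallet = ShotWallet(budget=200_000)
wallet.fire("interceptor", 100_000, killed=True)   # $100k missile on a $2k drone
wallet.fire("EW", 50, killed=True)
wallet.fire("interceptor", 100_000, killed=False)
print(wallet.remaining, wallet.cost_per_kill(), wallet.warning())
```

Two interceptor shots already put this crew over budget; surfacing `remaining` and `cost_per_kill()` on the operator's display during the rep, not in the debrief, is what changes behavior.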

This is culture work. When crews can see cost-per-effect during reps, they start fighting the budget the way they fight the air threat.

Lessons from Ukraine: EW First, C5ISR Fragile

Ukraine is a running lab on C5ISR degradation and EW saturation. The early and ongoing takeaways are clear: links die, GPS lies, and centralized C2 slows you down.[82],[83],[84],[85] Translate that into our TTPs:

- Autonomy bias: default to behaviors that degrade gracefully with intermittent comms—local mesh, mission intent packets, and time-boxed autonomy rather than constant C2.

- Navigation diversity: fuse inertial measurement units (IMU), vision, and radio-nav so GNSS denial can at most force drift, not loss.

- Edge triage: push targeting and prioritization logic down to the node—if the link to the “big brain” breaks, the swarm still fights the right fight.

- Telemetry minimalism: log what you need to learn but avoid chatty links that crater under EW pressure.
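Time-boxed autonomy reduces to a small state machine driven by how long the link has been silent: ride out brief dropouts, keep fighting on the last mission-intent packet for a bounded window, then execute the pre-briefed egress behavior. The minimal Python sketch below assumes those three modes and a simple clock; the mode names, grace period, and autonomy window are hypothetical parameters.

```python
from enum import Enum


class Mode(Enum):
    LINKED = "linked"                  # operator tasking flows normally
    LOCAL_AUTONOMY = "local_autonomy"  # keep executing the last mission-intent packet
    RETURN = "return"                  # time box expired: egress per the intent packet


class LinkLossPolicy:
    """Time-boxed autonomy: ride out short dropouts, fight on local intent for a
    bounded window, then execute the pre-briefed egress behavior."""

    def __init__(self, grace_s: float, autonomy_box_s: float):
        self.grace_s = grace_s
        self.autonomy_box_s = autonomy_box_s
        self.last_contact_s = 0.0
        self.mode = Mode.LINKED

    def tick(self, now_s: float, link_up: bool) -> Mode:
        if link_up:
            self.last_contact_s = now_s
            self.mode = Mode.LINKED
            return self.mode
        silent_s = now_s - self.last_contact_s
        if silent_s <= self.grace_s:
            self.mode = Mode.LINKED
        elif silent_s <= self.grace_s + self.autonomy_box_s:
            self.mode = Mode.LOCAL_AUTONOMY
        else:
            self.mode = Mode.RETURN
        return self.mode


policy = LinkLossPolicy(grace_s=2, autonomy_box_s=60)
for t, up in [(0, True), (1, False), (10, False), (70, False), (71, True)]:
    print(t, policy.tick(t, up).value)
```

The design choice is that losing the link never means losing the mission outright: the node keeps executing intent for a bounded time, and a restored link simply hands control back to the operator.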

The doctrine shift is simple: assume contested C5ISR, prove otherwise—then design the swarm to win anyway.

The Civilian Arsenal, Wired to This Fight

Per Part 5, the American civilian software world is the arsenal—and most of the coding will be done by contractors. Swarm warfare doubles down on that reality:

- Don’t fork industry’s value stream. Use contracted middleware and secure gateways to bring containerized, cloud-native autonomy and C2 into classified environments without forcing vendors to rewrite for bespoke stacks. Inherit controls via cATO patterns; certify pipelines and data paths, not each product from scratch.[4],[8]

- Buy effects, not brands. Specify latency to classify, kills per kilowatt, mean time to counter-update, and EW survivability indices. If one vendor slips, another that meets the interface and the metric can slot in, giving the government a trackable way to drive down the total cost of mission effect at speed and scale while forcing vendor competition.

- Pay for speed. Use MTA and the Software Acquisition Pathway to award increments on delivered telemetry and cost-per-effect improvements, not slide milestones (we’ll expand this later).[73]
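"Buy effects, not brands" implies a two-step rule: first gate on the published interface and metric thresholds, then rank whoever survives by cost per effect. The minimal Python sketch below assumes those metrics come from delivered test telemetry; the field names, threshold values, and vendor data are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class VendorOffer:
    vendor: str
    interface_compliant: bool      # passes conformance tests on the published interface
    latency_to_classify_ms: float  # from test telemetry, not slides
    mttcu_hours: float             # mean time to counter-update
    cost_per_effect: float         # dollars per validated effect


# Hypothetical thresholds a program office might publish.
REQUIREMENTS: Dict[str, float] = {"latency_to_classify_ms": 250, "mttcu_hours": 72}


def rank_offers(offers: List[VendorOffer],
                req: Dict[str, float] = REQUIREMENTS) -> List[VendorOffer]:
    """Drop anything that misses the interface or a metric threshold, then
    rank the rest by cost per effect (cheapest first)."""
    eligible = [
        o for o in offers
        if o.interface_compliant
        and o.latency_to_classify_ms <= req["latency_to_classify_ms"]
        and o.mttcu_hours <= req["mttcu_hours"]
    ]
    return sorted(eligible, key=lambda o: o.cost_per_effect)


offers = [
    VendorOffer("Vendor A", True, 200, 48, 900),
    VendorOffer("Vendor B", True, 180, 24, 1200),
    VendorOffer("Vendor C", False, 100, 12, 300),
    VendorOffer("Vendor D", True, 400, 24, 500),
]
print([o.vendor for o in rank_offers(offers)])
```

Vendor C is cheapest but off-interface, and Vendor D is cheap but too slow to classify; if Vendor A slips, Vendor B is already next in line without renegotiating the interface.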

The point is to keep the venture capital (VC) engine hot and the internal research & development (IRAD) flowing by making it easy to cross the cATO Rubicon fast, at scale—then easy to swap when someone better shows up.

Monday-Morning Pieces

- Publish a counter-swarm shot doctrine with cost-per-effect tables and DE/EW primacy.

- Stand up DE power/cooling kits as ACE cargo, with SLOs for spin-up and duty cycle.[39],[40],[41],[79],[80]

- Add firmware agility checklists (SSDF + SBOM + CVE/KEV) to every sUAS/CCA pre-flight and after-action workflow.[45],[46],[47]

- Build deny–deceive–deplete lanes into every major exercise; score them on economics, not just effects.[42],[43]

- Force contested-C5ISR assumptions in planning factors; prove you can fight through EW before you brief the happy path.[82],[83],[84],[85]

Swarm-on-swarm will not be won by the shiniest single counter. It will be won by system economics: who spends the least to remove the most threat, reliably, updatably, and at scale. DE resets the math; EW and deception keep it tilted; firmware agility sustains it; and a civilian-powered software pipeline keeps it moving faster than the enemy can learn.

7) Million-Plane Air Force & CCA/Replicator

The future force isn’t one exquisite airplane—it’s a fielded distribution of several CCAs and thousands upon thousands of sUAS per AOR, stitched by open interfaces and updated like software. Quantity gives us geometry, persistence, and the ability to trade steel for information advantage. MOSA makes that quantity smart—so sensors, EW payloads, and autonomy can spiral in months, not blocks of years.[28],[29],[30],[31],[32],[33]

The force-structure math

Start with the mission math, not the platform myth. An AOR-scale scheme might allocate: ISR/ELINT pickets to find and fix; decoy/jammer swarms to fracture enemy kill chains; armed sUAS "fighters" to pave the avenue; weapons mules to mass cheap effects; and a CCA “skein” to reach where manned aircraft won’t.[76] The point isn’t a magic number; it’s density, meaning enough airborne nodes that an adversary can’t attrit you faster than you regenerate. MOSA gives you the knobs: software portability standards (FACE), message schemas (UCI), and card-level modularity (CMOSS), so we can add a new seeker, swap a radio, or change autonomy without resetting the whole fleet.[28],[29],[30],[31] Commanders get an effects portfolio they can rebalance daily: more ISR today, more decoys tomorrow, more EW when the enemy lights up.
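The density argument can be made concrete with the simplest possible attrition model: each day the adversary removes a fixed fraction of airborne nodes and we regenerate a fixed number. The fleet then converges to a steady state of regeneration divided by attrition rate, which also tells you the daily regeneration needed to hold a target density. The minimal Python sketch below assumes that linear model; real attrition depends on density, enemy magazines, and tactics, and all numbers are hypothetical.

```python
from typing import List


def airborne_density(n0: float, attrition_rate: float, regen_per_day: float,
                     days: int) -> List[float]:
    """Daily airborne-node count under a constant attrition fraction and fixed
    regeneration: n[t+1] = n[t] * (1 - attrition_rate) + regen_per_day."""
    history = [float(n0)]
    for _ in range(days):
        history.append(history[-1] * (1 - attrition_rate) + regen_per_day)
    return history


def steady_state(attrition_rate: float, regen_per_day: float) -> float:
    # Fixed point of the recurrence: n* = regen / attrition.
    return regen_per_day / attrition_rate


def regen_needed(target_density: float, attrition_rate: float) -> float:
    # Daily regeneration required to hold a target density at equilibrium.
    return target_density * attrition_rate


history = airborne_density(n0=1000, attrition_rate=0.2, regen_per_day=300, days=30)
print(round(history[-1]), steady_state(0.2, 300), regen_needed(2000, 0.2))
```

Under 20 percent daily attrition, 300 new nodes a day holds roughly 1,500 in the air; holding 2,000 takes roughly 400 a day. The lever is production and launch tempo, not a better single airframe.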

Pilot to swarm-commander

We graduate pilots from stick skill to mission intent for teams of systems. We already do this to a lesser extent; the F-22 is an easier-to-fly plane for standard flight than a C-172 Cessna because the expectation is the pilot is focusing on winning the fight, not worrying about flight mechanics.[86] Abandoned projects like Skyborg and Golden Horde were doctrine seeds: onboard autonomy with human commanders setting goals, guardrails, and target priorities while the machine handles formation, routing, deconfliction, and timing.[34],[35] On the glass, that looks like tasking by verbs—screen, fix, blind, suppress—plus constraints (collateral, spectrum, ROE) and SLOs (latency, dwell). The operator calls the play; the swarm runs it; telemetry closes the loop in minutes, not months.
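Tasking by verbs plus constraints and SLOs suggests a small, checkable mission-intent packet that the ground station validates before it ever reaches the swarm. The minimal Python sketch below assumes the four verbs named above and a required set of constraint and SLO keys; the `MissionIntent` structure, key names, and area label are hypothetical, not an existing message standard such as UCI.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Verbs taken from the text; a real vocabulary would be set by doctrine.
ALLOWED_VERBS = {"screen", "fix", "blind", "suppress"}
REQUIRED_CONSTRAINTS = ("collateral", "spectrum", "roe")
REQUIRED_SLOS = ("latency_ms", "dwell_s")


@dataclass
class MissionIntent:
    verb: str
    area: str
    constraints: Dict[str, str] = field(default_factory=dict)
    slos: Dict[str, float] = field(default_factory=dict)

    def validate(self) -> List[str]:
        """Return problems that must be fixed before the packet goes to the swarm."""
        errors = []
        if self.verb not in ALLOWED_VERBS:
            errors.append(f"unknown verb: {self.verb}")
        errors += [f"missing constraint: {k}" for k in REQUIRED_CONSTRAINTS
                   if k not in self.constraints]
        errors += [f"missing SLO: {k}" for k in REQUIRED_SLOS if k not in self.slos]
        return errors


task = MissionIntent(
    verb="screen",
    area="hypothetical-box-7",
    constraints={"collateral": "low", "spectrum": "plan-3", "roe": "weapons-hold"},
    slos={"latency_ms": 500, "dwell_s": 120},
)
print(task.validate())  # []
print(MissionIntent("orbit", "hypothetical-box-7").validate())
```

Rejecting an incomplete packet at the glass keeps the human's job where the text puts it: setting goals, guardrails, and priorities, while the machine handles formation, routing, and timing.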

Replicator/RDER: quantity on purpose

We stop pretending attrition won’t happen and design for it. Use Replicator and RDER for volume and iteration: short learning cycles, fast tooling, and block upgrades; award production to those who improve cost-per-effect each quarter, not to those who perfect a static spec.[45],[49] Accept higher production tempo with statistical airworthiness and a bias for fieldable today over perfect tomorrow. Keep vendors in a race on common interfaces; if a supplier slips, another drops in with no mission pause (MOSA again).

The SBOT

Hardware scales the body; software scales the brain. We institutionalize an SBOT—a signed, versioned bundle that travels with each mission family:

- Models & behaviors: perception nets, target selectors, route planners, EW playbooks, fail-safes, geofencing, and “graceful degradation” states.

- Policy cages: ROE encoders, no-go lists, human-on-the-loop checkpoints, and abort logic.

- Data contracts: feature schemas, timestamps, confidence scoring, and latency SLOs for every pub/sub edge.

- Safety & supply-chain: SSDF patterns, SBOMs for autonomy stacks, and provenance metadata tied to signing keys.[4],[8],[45]

- Test artifacts: sim scenarios, red-team seeds, and flight logs needed to roll forward or roll back with cATO inheritances (much more about this below in Section 12).

Treat SBOT like munitions: inventory it, inspect it, and update it at operational tempo.
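Treating an SBOT like munitions starts with a manifest that pins every artifact in the bundle (models, policy cages, data contracts, SBOMs, test artifacts) to a digest, plus a signature over that manifest. The minimal Python sketch below assumes that layout; HMAC-SHA256 stands in for a real asymmetric signing service, and the bundle name, version string, and file paths are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Dict


def build_manifest(name: str, version: str, artifacts: Dict[str, bytes]) -> dict:
    """Digest every artifact so nothing in the bundle can change silently."""
    return {
        "name": name,
        "version": version,
        "artifacts": {path: hashlib.sha256(blob).hexdigest()
                      for path, blob in sorted(artifacts.items())},
    }


def sign_manifest(manifest: dict, key: bytes) -> str:
    # HMAC stands in for a real asymmetric signing service in this sketch.
    payload = json.dumps(manifest, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_bundle(manifest: dict, signature: str,
                  artifacts: Dict[str, bytes], key: bytes) -> bool:
    """Accept the bundle only if the manifest signature verifies, no artifact is
    missing or extra, and every artifact matches its recorded digest."""
    if not hmac.compare_digest(sign_manifest(manifest, key), signature):
        return False
    if set(artifacts) != set(manifest["artifacts"]):
        return False
    return all(hashlib.sha256(artifacts[path]).hexdigest() == digest
               for path, digest in manifest["artifacts"].items())


key = b"demo-only-key"
bundle = {
    "models/perception.onnx": b"weights",
    "policy/roe.yaml": b"weapons-hold",
    "sbom/autonomy.spdx.json": b"{}",
}
manifest = build_manifest("isr-picket", "2025.3.1", bundle)
signature = sign_manifest(manifest, key)
print(verify_bundle(manifest, signature, bundle, key))  # True
tampered = {**bundle, "policy/roe.yaml": b"weapons-free"}
print(verify_bundle(manifest, signature, tampered, key))  # False
```

Because the policy cage is just another digested artifact, a quiet edit to ROE encoding fails verification exactly like a corrupted model file would.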

Logistics for a million

Mass only matters if it sustains, and sustainment becomes a different discipline when the inventory is a mass of disposable plastic.

- Batteries as ammunition. Plan state-of-health (SOH) telemetry, palletized chargers, and swap SOPs into ACE playbooks. Stage chemistries by climate; recycle at theater hubs.

- Airworthiness at scale. Move from bespoke flight releases to airworthiness-by-constraint: a certified operating envelope (wind, icing, gross weight, autonomy mode) that vendors must prove through real-world testing, or through digital-twin simulation plus sample flights, with continuous telemetry checks tightening or expanding the live envelope over time.

- Spectrum as airspace. Publish a Spectrum ATO daily: frequencies, power, dwell, and emissions etiquette per mission packet. Automate deconfliction so sUAS/CCA networks don’t jam ourselves—and can gracefully reroute under enemy EW.

- Spares automation. Depending upon cost thresholds, design to two-deep line replaceable units (LRUs) and a digital thread from serial number → failure mode → next-best-spare. Use predictive maintenance on motors, props, and similar wear items; print plastics at the edge when wise, but centralize complex spares where yield matters. The LRU requirement is not a threshold requirement but an objective for modular systems above a PEO-specified cost. For example, extremely low-cost ISR quadcopters used by operators at the tactical edge are not suited to LRU-level replacement; they are simply expendable.[87]

- Zero Trust for the fleet. Every drone is a compute node; treat C2, update, and telemetry channels as untrusted by default (identity, segmentation, continuous attestation).[7]

- Base defense integration. Align C-UAS and infrastructure hardening with swarm ops so our own mass doesn’t blind our own sensors. This is painfully clear when dealing with critical infrastructure.[44]
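Automated deconfliction for a daily Spectrum ATO can start as greedy graph coloring: mission packets that would interfere if co-channel share an edge, and each packet, in priority order, takes the lowest free channel. Rerouting under enemy EW is then just rerunning the assignment with jammed channels removed. The minimal Python sketch below assumes abstract channel labels and a precomputed conflict set; real deconfliction also weighs power, geometry, and timing, and greedy coloring is not guaranteed optimal. Packet and channel names are hypothetical.

```python
from typing import Dict, FrozenSet, List, Set, Tuple


def assign_channels(packets: List[str], conflicts: Set[FrozenSet[str]],
                    channels: List[str]) -> Tuple[Dict[str, str], List[str]]:
    """Greedy co-channel deconfliction.

    packets: mission packets in priority order (highest first).
    conflicts: pairs that would interfere if they shared a channel.
    channels: usable channels for this ATO period (jammed ones already removed).
    Returns the assignment plus any packets that could not be placed.
    """
    assignment: Dict[str, str] = {}
    unplaced: List[str] = []
    for packet in packets:
        taken = {ch for other, ch in assignment.items()
                 if frozenset((packet, other)) in conflicts}
        free = [ch for ch in channels if ch not in taken]
        if free:
            assignment[packet] = free[0]
        else:
            unplaced.append(packet)  # escalate: add spectrum, shift timing, or drop priority
    return assignment, unplaced


packets = ["ISR-1", "EW-1", "DECOY-1", "ISR-2"]
conflicts = {frozenset(p) for p in [("ISR-1", "EW-1"), ("EW-1", "DECOY-1"),
                                    ("ISR-1", "DECOY-1"), ("ISR-2", "ISR-1")]}
print(assign_channels(packets, conflicts, ["C1", "C2"]))
# Enemy jams C1: rerun with the remaining channels.
print(assign_channels(packets, conflicts, ["C2", "C3", "C4"]))
```

With only two channels the decoy packet cannot be placed and gets flagged for a planner; once C1 is jammed and three other channels are available, everything fits, which is the "gracefully reroute" behavior in practice.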

Exportability and coalition teaming by design